I prepared the following text as part of the process for my promotion to the rank of Full Professor at the Department of Computer Science of UFES.

1. From Childhood to Electronic Engineering at UFRJ (1974–1984)

During my childhood in Cachoeiro de Itapemirim – ES, I developed a great interest in understanding how everything around me worked (I believe this was influenced by my father's activities, as he was a watchmaker). This interest ranged from common technological artifacts, such as fluorescent lamps, to natural systems, such as the Sun (how could it generate so much energy, continuously, so stably and for so long?). Due to this interest, I became an avid reader and, thanks to Tecnirama: Encyclopedia of Science and Technology (Figure 1), I learned a great deal about almost everything that interested me at the time.

However, due to its broad scope, certain topics in Tecnirama were covered with insufficient depth to satisfy my interest. I highlight my interest in how the radio worked. How was it possible for sound generated in some distant place to reach my home through that device? Studying Tecnirama, I learned about various pieces of equipment involved in the process, such as microphones, loudspeakers, turntables, among others. But the vacuum tube radio (at the time, the active elements of radios were thermionic valves, Figure 2) was far too complex to be covered in Tecnirama with the depth I desired – I needed to learn more than what was available in it.

By chance, at 11 years of age (1974), I discovered the Instituto Rádio Técnico Monitor (now Instituto Monitor - http://www.institutomonitor.com.br), which at the time offered a technical course in "Radio, Television and Electronics" by correspondence (this teaching modality is now recognized as Distance Learning). When I turned 12 (1975), my father gave me the gift of funding this course (he passed away in a car accident before I completed the course) and, at 13 years of age, I already knew how radios, televisions, and various other electronic equipment worked (the quality of the course was excellent). Thanks to what I learned, I started working as an electronics technician in a large repair shop in Cachoeiro at age 14.

However, I soon discovered that I had learned how equipment worked, but not how to design them. This discovery came when I tried to design and build a radio transmitter, still at age 14. I succeeded, and my 5-Watt transmitter, based on the 50C5 vacuum tube (Figure 3), was capable of transmitting in AM (Amplitude Modulation), in the medium wave band (https://pt.wikipedia.org/wiki/R%C3%A1dio_AM 1 MHz), to a distance of about 200 meters – the distance from my house to the central square of the neighborhood where I lived.

To extend the range of the transmitter and reach the entire neighborhood, I built a 60-Watt amplifier for the transmitter, based on 2 6L6 vacuum tubes in a Class AB1 push-pull configuration (Figure 4). However, although I had more than tenfold increased the transmitter's power, the range did not exceed 200 meters.

Through the process of developing the amplifier and observing the results achieved with it, I realized that, although I knew how each part of the system worked, I did not know precisely how to design a radio frequency transmitter system – I had managed to design and build the transmitter and the amplifier, but I could not explain why the range had not increased. In other words, there was more to learn about electronics than what I had learned in my Radio, Television and Electronics course: how to design electronic equipment.

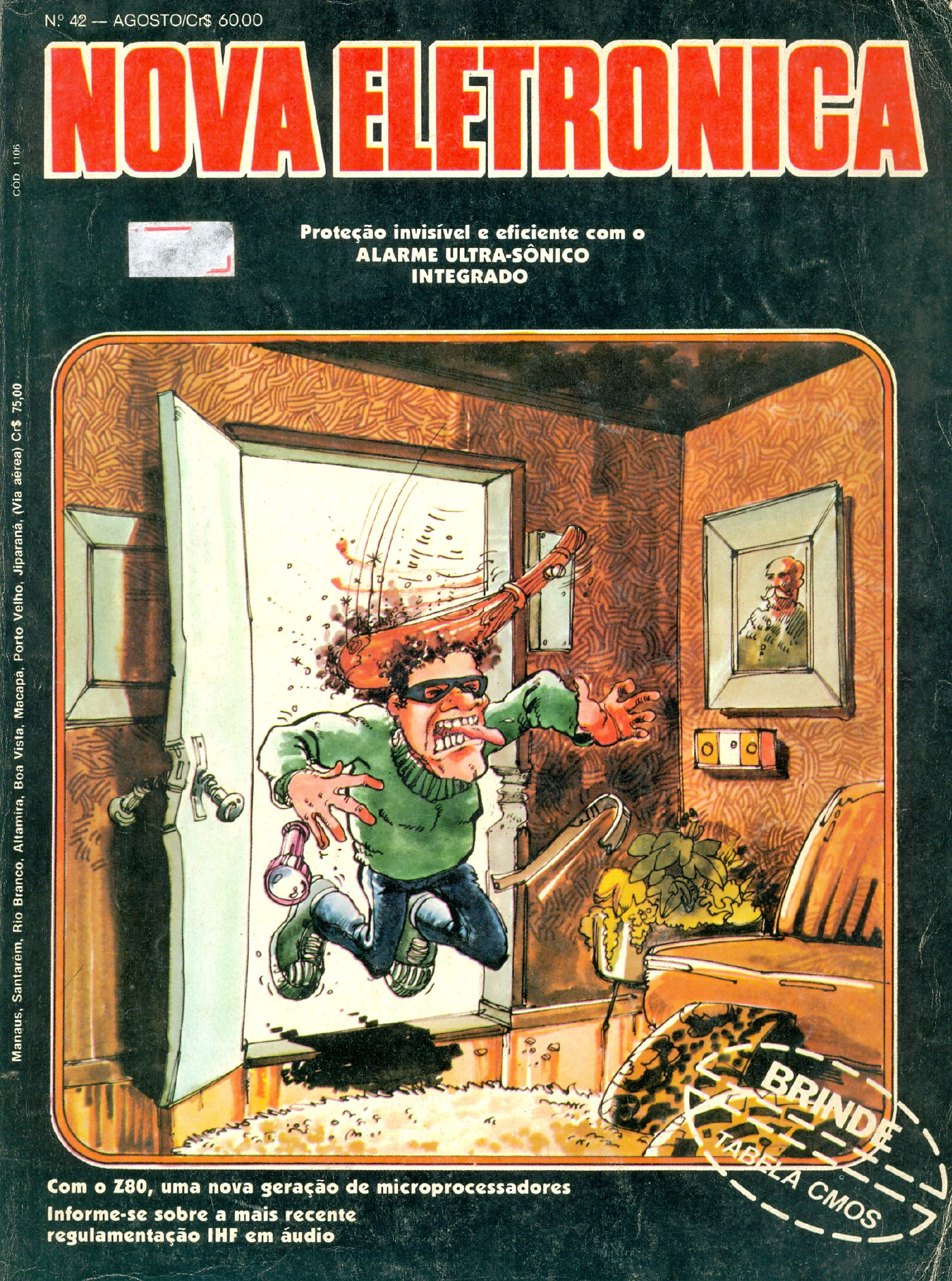

During this period, I also had my first contact with computing, through the magazine Nova Eletrônica, which in its first issue presented the first chapter of a Microcomputer Programming course (Nova Eletrônica no. 1, February 1977, Figure 5(a)). Due to the quality of its technical content, I began purchasing this magazine every month (it was monthly) and followed it until its last issue (no. 114, August 1986, Figure 5(b)).

Figure 5: First (a) and last (b) issues of the monthly magazine Nova Eletrônica

At 16 years of age (1979), I met in Vitória – ES an engineer with a degree in electronic engineering. He was my instructor in a course about a new color TV set from the electronics manufacturer Sharp, which was arriving on the Brazilian market (Sharp and the repair shop where I worked in Cachoeiro funded my participation in the course). During the course breaks, I was able to discuss with him the difficulties I had with my transmitter, developed years earlier. Through him, I learned what was taught in an electronic engineering program (calculus, physics, advanced electronic devices, digital electronics, etc.) and decided to become an engineer.

Still at age 16, I moved to the State of Rio de Janeiro to prepare for the university entrance exam (there was no, and still is no, electronic engineering program at UFES). I got a job as a technician at an electronics repair shop in Niterói – RJ and, at 18, I passed the entrance exam for the Telecommunications Engineering program at UFF. However, after one week of classes, I concluded that it was impossible to pursue an engineering degree as I intended and work at the same time. Thus, I decided to save enough money to go 5 years without working and, in this way, be able to dedicate myself full-time to the engineering program.

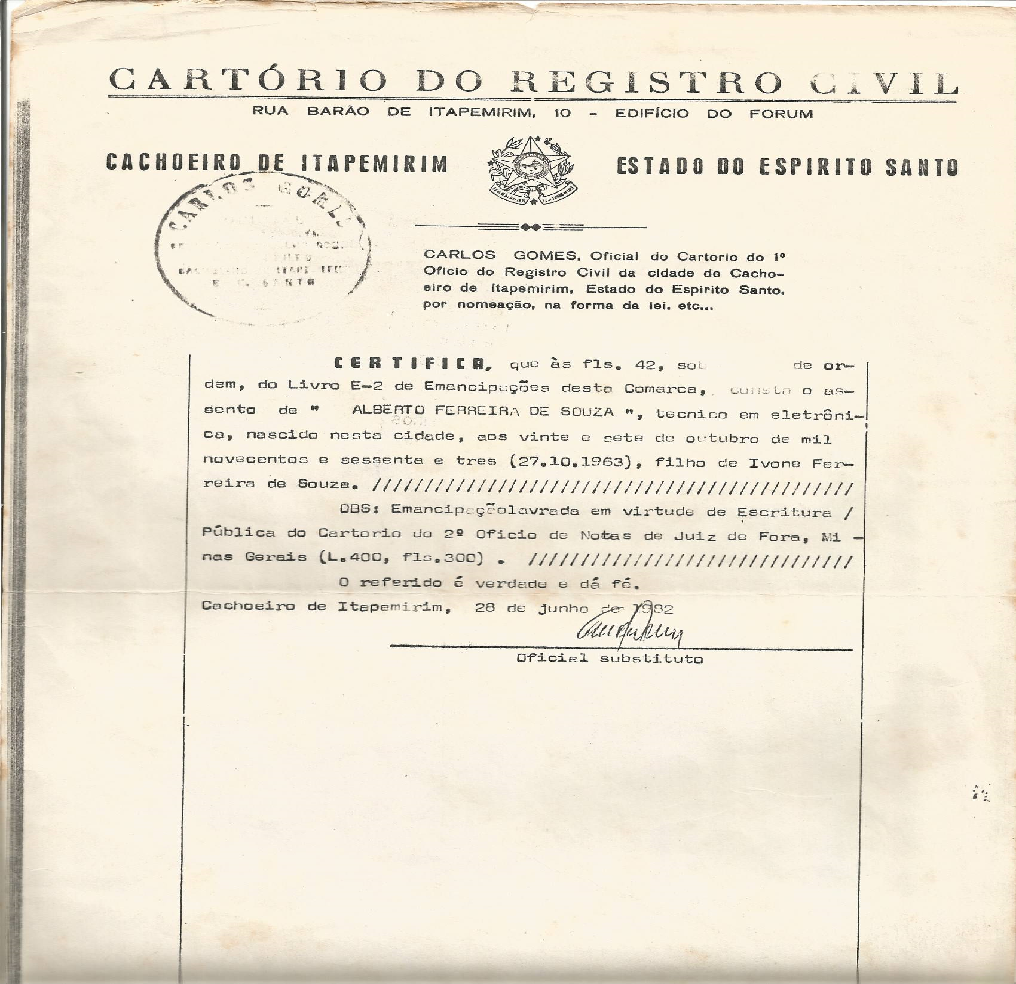

To obtain the resources necessary to go 5 years without working within a reasonable timeframe, I opened, together with a friend, a repair shop for naval electronic equipment, still at age 18 (it was necessary to officially emancipate myself to be a partner in the shop, since only those over 21 could be business owners at the time – Figure 6). Thanks to the high cost of the naval electronic equipment I repaired (Radars, Radio Direction Finders, Echo Sounders, etc.), in less than two years I had already amassed enough resources to complete my entire engineering degree without needing to work. However, my partner requested that I remain at the company for one more year for the benefit of the company and its team.

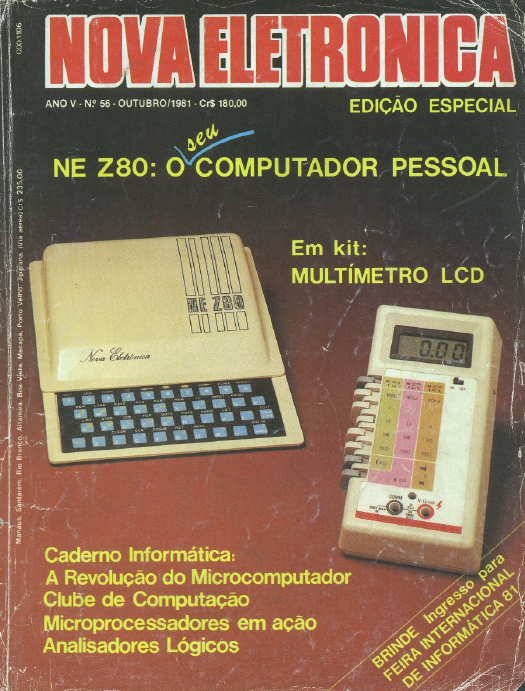

At that time, through the magazine Nova Eletrônica, I learned about the Z80 processor (Nova Eletrônica no. 42, August 1980, Figure 7(a)) and, later, through it I acquired my first computer – the NE-Z80 – which was based on this processor (no. 56, October 1981, Figure 7(b)). The NE-Z80 was sold as a component kit to assemble (http://www.mci.org.br/micro/prologica/nez80.html), meaning I assembled my first computer at age 18.

Figure 7: Covers of Nova Eletrônica magazine issues 42 (a) and 56 (b)

2. Electronic Engineering at UFRJ (1984–1988)

At 21 years of age (1984), I sold my share in the company, invested the proceeds in the financial market, and finally entered UFRJ to take the basic cycle of engineering courses (at the time, at UFRJ all engineering programs had a common basic cycle).

During the two years of UFRJ's engineering basic cycle, there were no electronics courses. Eager to learn electronics, I decided to visit the COPPE laboratories in search of learning opportunities. In this process, I met Professors Felipe Maia Galvão França (http://lattes.cnpq.br/1097952760431187, at the time a master's student) and Edil Severiano Tavares Fernandes (http://lattes.cnpq.br/4514999019313866, who passed away in October 2012), both from the Systems and Computer Engineering Program at COPPE. Felipe would become my final project advisor, and Edil, my Scientific Initiation advisor for several years and my master's thesis advisor.

Edil and Felipe granted me full access to the resources of the COPPE Systems and Computing Laboratory and encouraged my involvement in various research projects under development in the laboratory at the time. One of these projects consisted of transforming a Mitra-15 minicomputer from its original form, i.e., a microprogrammed machine (http://www.feb-patrimoine.com/english/mitra.htm), into a microprogrammable machine. This project resulted in my first national publication[1] and my second international journal publication[2]. Thanks to this and other work, while still an undergraduate, I was hired as a technician (programmer) by COPPE/UFRJ (in 1986).

My first international journal publication was the result of my final project work[3]. Titled "The Hardware of a Hybrid Parallel Machine," this work involved the design and implementation of the MPH (Máquina Paralela Híbrida – Hybrid Parallel Machine), perhaps the first Brazilian parallel computer. The MPH was a multiprocessor system organized according to a hypercubic topology and employed an interprocessor communication mechanism based on shared memory – each of its 16 nodes had four memory regions shared with four neighbors, enabling the implementation of a degree-4 hypercube (https://en.wikipedia.org/wiki/Tesseract). The processors used were 6809s (used at the time in the TRS-80 microcomputer https://en.wikipedia.org/wiki/TRS-80_Color_Computer). This project also resulted in my second national publication[4] and in the first-place award at the VII Scientific Initiation Works Competition (CTIC) of the Brazilian Computer Society (SBC), Figure 8.

With the knowledge acquired in the Electronic Engineering program at UFRJ, I learned to design not only analog electronic equipment, such as amplifiers, power supplies, and radio equipment, but also digital electronic systems such as the MPH. But something that made me particularly happy about what I learned during the program was discovering why my 60-Watt AM transmitter had not achieved a range greater than 200 meters.

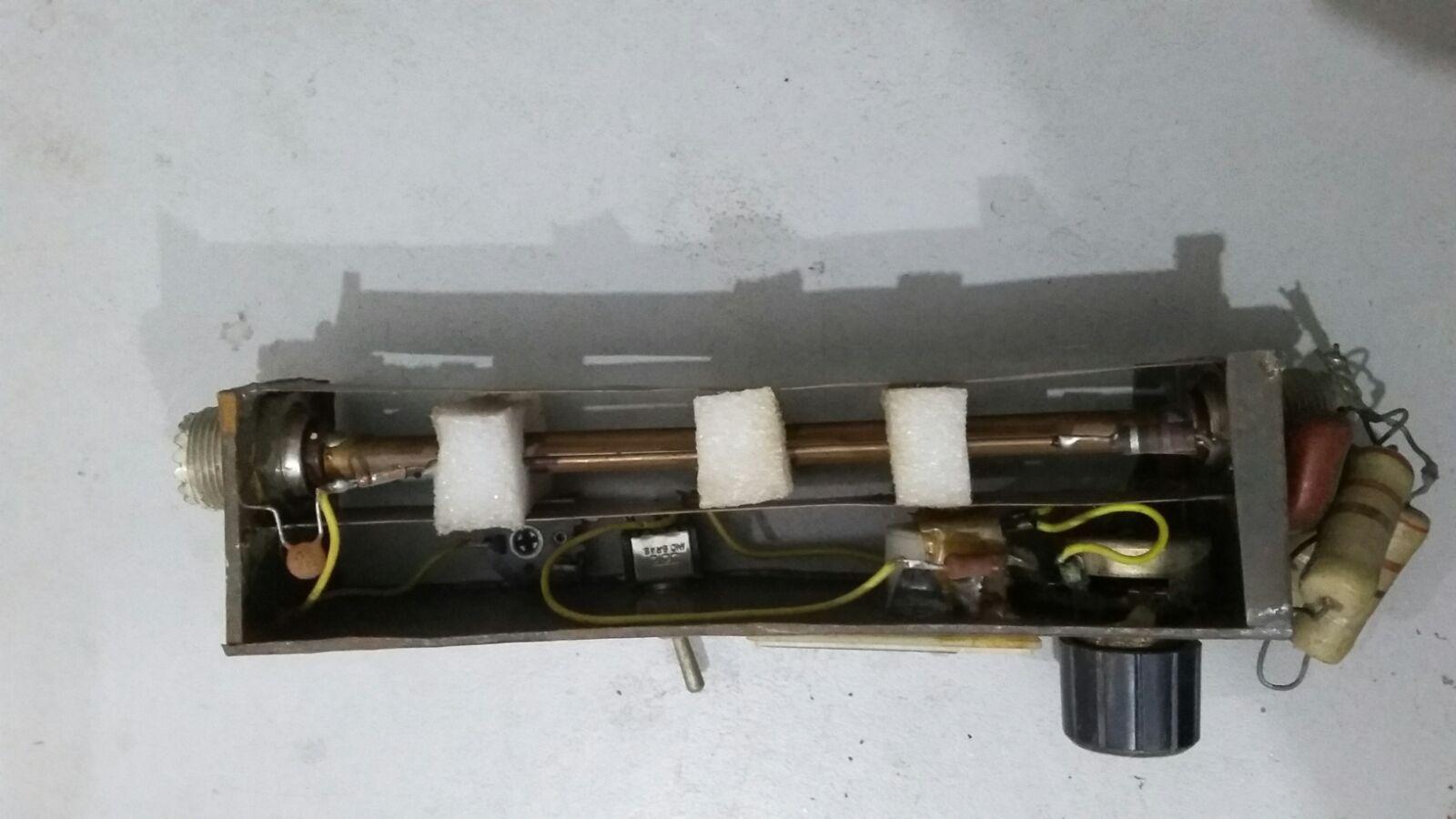

Near the end of the program, I had the opportunity to build a 30-Watt FM transmitter (Figure 9). By then, I had already learned that, to be properly propagated, radio signals require an antenna: (i) of the correct size for the transmitter's operating frequency, (ii) of the correct impedance for the transmission line used, and (iii) of the correct type for the desired radiation pattern. In the case of my FM transmitter, the operating frequency was 100 MHz and the desired radiation pattern was omnidirectional. For omnidirectional transmission at this frequency, a vertical half-wave dipole antenna can be used (https://en.wikipedia.org/wiki/Dipole_antenna), which can be adjusted to have an impedance of approximately 50 Ohms (http://coral.ufsm.br/gpscom/professores/andrei/Semfio/cap6tulo%204.pdf), enabling the use of 50-Ohm coaxial cables (widely available and low-cost both now and at the time) as the transmission line. The wavelength at this frequency is 3 meters; therefore, a vertical half-wave dipole for this frequency is 1.5 meters long – something easy to build. In fact, my 30-Watt FM transmitter connected to a 1.5-meter dipole transmitted over a distance of more than 10 km with good signal quality (from Block H of the UFRJ Technology Center to Candelária, in downtown Rio de Janeiro). To achieve the same with my AM transmitter, a 150-meter dipole would have been required (the wavelength at 1 MHz is 300 meters)!

I completed my Electronic Engineering degree at UFRJ in August 1988, one semester ahead of schedule (I completed the program in 4.5 years). Thanks to my performance in the program, I received the Academic Distinction cum laude (with honors) from UFRJ.

Figure 9: (a) From left to right: oscillator module, FM modulator and Radio Frequency (RF) pre-amplifier; Standing Wave Ratio (SWR – https://pt.wikipedia.org/wiki/Rela%C3%A7%C3%A3o_de_ondas_estacion%C3%A1rias) meter module, necessary to calibrate the antenna size according to the transmitter's operating frequency and the impedance of the transmission line carrying the RF signal to the antenna, thus optimizing the amount of transmitted energy; RF power amplifier module. (b) Internal view of the oscillator module, FM modulator and RF pre-amplifier. (c) Internal view of the SWR meter. (d) Internal view of the RF power amplifier. Recent photographs.

3. Master's Degree at COPPE/UFRJ (1989–1992)

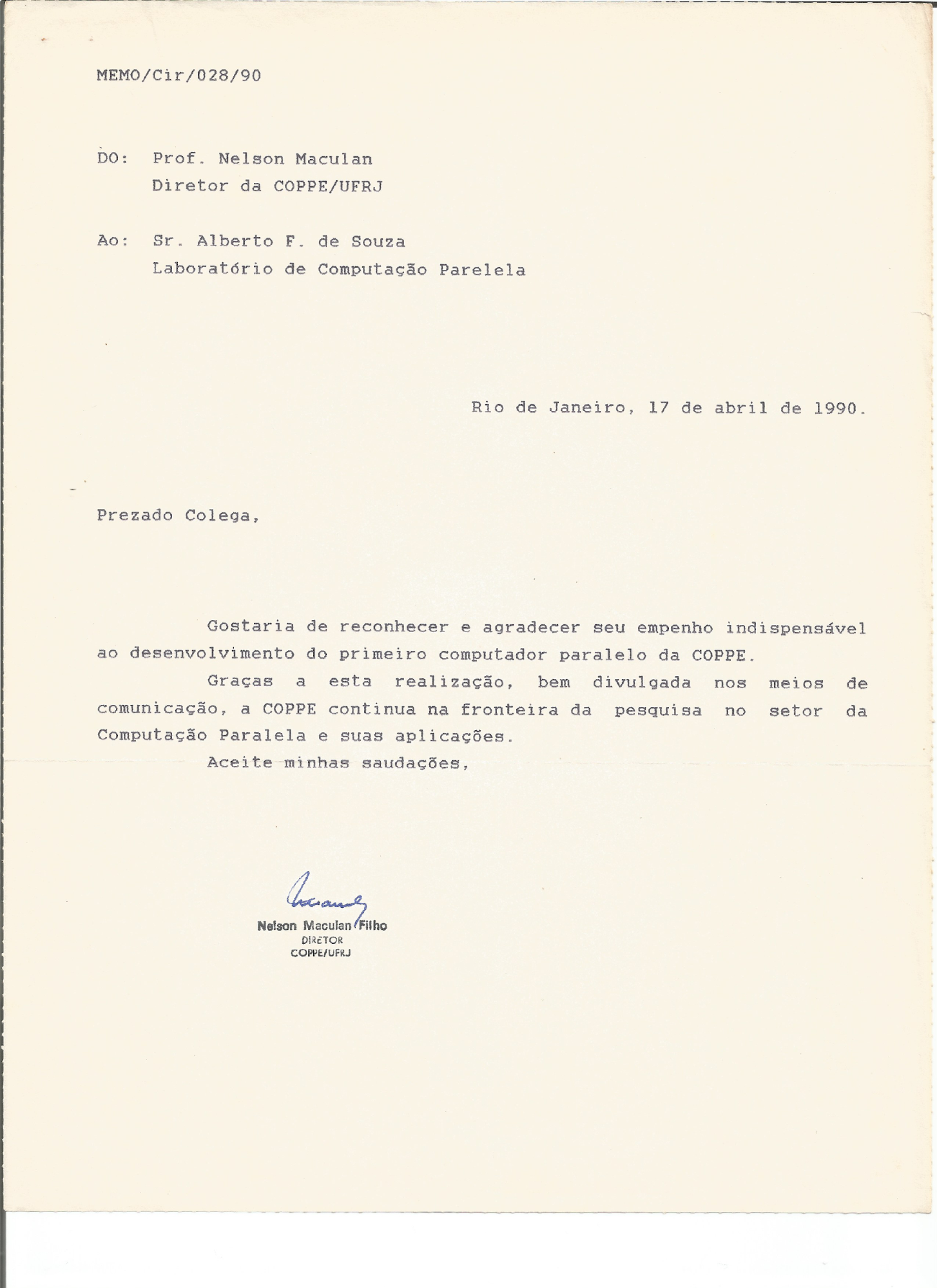

As soon as I completed my undergraduate degree, in 1988, I was promoted to researcher by COPPE/UFRJ and, in 1989, I began my master's program under the supervision of Professor Edil S. T. Fernandes. In parallel with my master's studies, I worked at COPPE as one of the designers of Brazil's first parallel supercomputer, the NCP-I[5]. The NCP-I project was coordinated by COPPE Systems Professor Claudio Luis de Amorim and funded by FINEP.

By 1990, the electronic design of the NCP-I was completed and some of its nodes were already assembled and in operation (Figure 10). I had already written a good portion of my master's thesis, had been accepted for doctoral studies at 7 universities in the United Kingdom (positively influenced by Professors Edil and Felipe, I had decided to pursue my doctorate in the United Kingdom), and had my scholarship applications for doctoral studies abroad approved by both CNPq and CAPES. However, with President Collor's rise to power, a large part of the NCP-I project's funding was cut. The manner in which the cuts occurred and the general situation of the country under Collor's presidency led me to believe that the future of scientific research in Brazil was permanently compromised. Faced with this scenario, I decided to abandon the academic career and move to Vitória – ES, where I founded a new electronics repair shop: Rock's Eletrônica (Figure 11).

Rock's Eletrônica was a great success and, within two years of operation, already had 2 branches (in the cities of Vila Velha and Linhares). However, I did not lose contact with the friends I had made at COPPE. One of them, Professor Francisco Negreiros Gomes, who was a professor at UFES and was pursuing his doctorate at COPPE during the period when I was an undergraduate, insisted that I finish my master's degree and apply for a position at UFES every time we met. In early 1993, I took his advice (Collor had already been impeached), and I completed and defended my master's thesis.

In my master's research, I investigated the instruction-level parallelism (Instruction-Level Parallelism – ILP) available in real benchmark programs of the time that could be exploited by machines with very long instruction words (Very Long Instruction Word – VLIW machines). Additionally, I investigated how VLIW machines should be balanced (the appropriate number of functional units, registers, memory access ports, etc.) to take advantage of this ILP[6],[7].

Having completed my master's degree, I applied for a professorial position at the Department of Computer Science of UFES.

4. Early Career at UFES and Doctoral Studies (1993–1999)

On September 2, 1993, I assumed the position of Professor at the Department of Informatics (DI) of UFES and stepped away from the management of Rock's Eletrônica. At the beginning of 1994, I was appointed as the DI representative in the Collegiate of the Computer Engineering Program at UFES and was elected program coordinator.

Created in 1990, the Computer Engineering Program had not yet graduated its first class and, at the time, there were doubts at CREA-ES about how the graduates would be registered there, since Computer Engineering programs were a novelty in the country. Due to this issue, I studied what the profile of a Computer Engineer appropriate for the time should be and brought the knowledge about what I learned [8] to CREA-ES. This proximity with CREA-ES led the Technology Center of UFES, which houses the Department of Informatics and all the engineering departments of the university's main campus, to nominate me as UFES's representative on the CREA-ES Council in 1995. My involvement at CREA-ES, not only in defending the Computer Engineering field but also in debating various relevant issues for the engineering field in Espírito Santo at the time, resulted in an invitation to join the board of the organization as its First Secretary Director, Member of the Budget and Procurement Committee, and Member of the Informatics Committee.

5. The Doctoral Period at University College London (1996–1999)

At the end of 1995 and beginning of 1996, I left the Board of CREA-ES and the Coordination of the Computer Engineering Program to prepare for my doctoral studies. Once again, I was accepted for doctoral studies at several universities in the United Kingdom and had my applications for doctoral fellowships abroad accepted by both CNPq and CAPES. I chose to pursue my doctorate at University College London (UCL) with a CAPES fellowship and began the program in September 1996. Before going to the United Kingdom, I sold all my shares in Rock's Eletrônica and its branches.

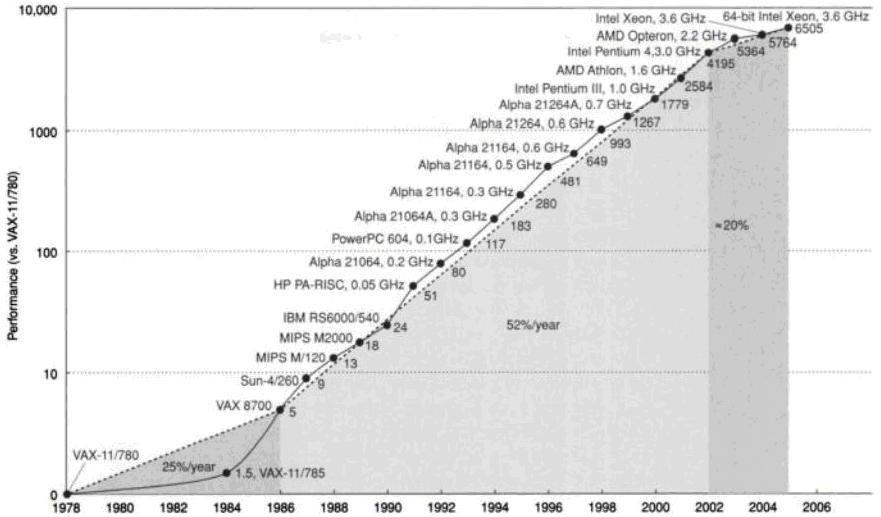

During my doctorate at UCL, I studied the dominant microarchitecture of processors at the time, i.e., the Superscalar architecture, and, in particular, the difficulties associated with exploiting ILP in these architectures. The main difficulty was the complexity of Superscalar machines, which grows exponentially with only a linear increase in ILP exploitation capability.

In Superscalar machines, instructions are continuously fetched from the instruction cache and placed in an instruction window where, at each machine cycle, several of them are analyzed (to identify which can be executed in parallel), selected, and dispatched for parallel execution dynamically. Dynamic instruction scheduling hardware is used to perform the analysis, selection, and dispatch of instructions for execution. The complexity of this hardware grows exponentially with the size of the instruction window because, during the dynamic scheduling process, each additional instruction accommodated in the window needs to be compared with all other instructions in the window and all instructions currently in execution.

Influenced by what I had learned about VLIW architectures during my master's studies, after my initial studies on Superscalar architectures I began to reflect on architectural alternatives for ILP exploitation based on the VLIW concept.

The main disadvantage of VLIW machines is the code incompatibility between different generations of the same architecture (lack of backward code compatibility), resulting from the static scheduling of VLIW instructions performed by the compiler for a specific VLIW hardware. My doctoral thesis was the proposal of the Dynamically Trace Scheduled VLIW (DTSVLIW) architecture [9], [10], which solves the backward code compatibility problem of VLIW machines.

5.1. The DTSVLIW Architecture

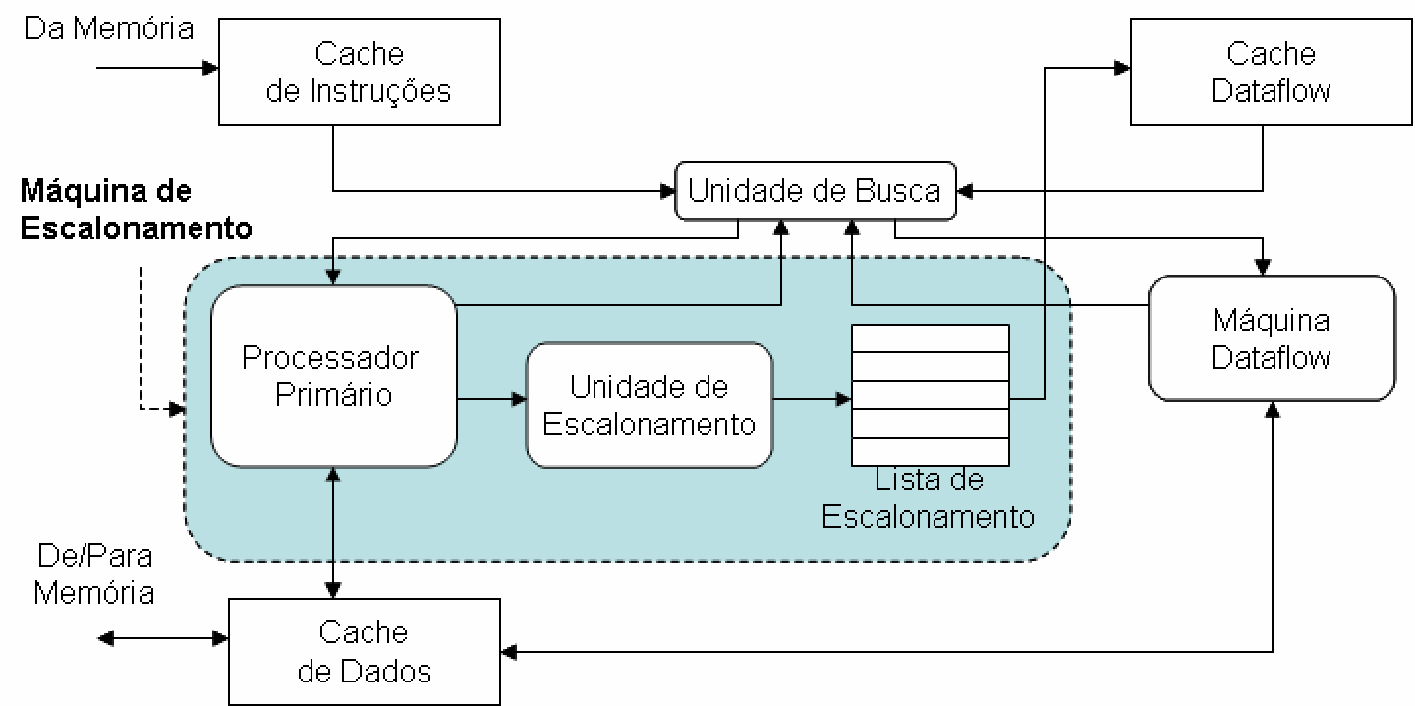

Figure 12 shows a block diagram of the DTSVLIW architecture. In a DTSVLIW processor, the Scheduler Engine fetches instructions from the Instruction Cache and executes them for the first time using a simple pipelined processor — the Primary Processor. Furthermore, its Scheduler Unit dynamically schedules the instruction sequence (trace) produced during execution on the Primary Processor into VLIW instructions, groups these VLIW instructions into blocks, and saves these blocks in the VLIW Cache. If the same code is executed again, it is fetched from the VLIW Cache by the VLIW Engine and executed in VLIW parallel mode. Although the code must initially be executed sequentially, experiments showed that a DTSVLIW machine parameterized according to the technology available in 2003 spends more than 95% of cycles executing parallel VLIW code [11].

In 1999, Professor Francisco Negreiros passed away in a car accident. The event led me to reflect on the plans we had made together for the Department of Informatics and the Graduate Program in Informatics at UFES. With my thesis already defined and the sense of urgency brought by such reflections, I decided to complete my doctorate in 3 years instead of the originally planned 4, and return to Brazil.

6. Return to Brazil — University Administration (1999–2013)

My doctoral period abroad and my debates with Professor Francisco Negreiros made me clearly see the precarious conditions for conducting research activities at the Department of Informatics of UFES in 1999. The DI (i) did not have sufficient physical space for research activities (especially laboratory space), (ii) did not have adequate hardware for research, and (iii) did not have the bibliographic resources to keep up with the state of the art in the department's areas of interest (or any other area).

My involvement in proposing and organizing debates within the department to address these problems led to my election as Head of the DI in January 2000. As Head, I began working with the Departmental Council of the Technology Center (CT) of UFES to show the other department heads and the Center's administration the needs of the DI. The DI had particularities as it had been the last department created in the Center; especially because it was created partly by a group of professors from another Center (professors from the Department of Mathematics of the Center for Exact Sciences), and partly by a group of professors from the CT. However, many of the difficulties faced by the DI were also faced by other departments in the CT. My involvement in debates about how to best address these difficulties led to my candidacy as Vice-Director of the CT in the year 2000 election for the CT's leadership. Still in 2000, I was elected Vice-Director of the CT.

As Vice-Director, I actively participated in various debates and actions that led to the improvement of conditions for teaching, research, and extension activities at the DI in particular and the CT in general. I highlight my work in coordinating the CT Physical Space Committee and coordinating the Committee for the Creation of the CT Branch Library. Thanks to the work of the Physical Space Committee, guidelines were established and actions were taken that resulted in a significant expansion of the CT's physical space, with the construction of the CT-IX building (designated for the DI) and, subsequently, the CT-X (Production Engineering) and CT-XII (available to all CT programs) buildings, among others. Thanks to the work of the Committee for the Creation of the CT Branch Library, the Technology Branch Library was created and today houses a collection of specific interest to the CT, particularly the CT's Graduate Programs.

My role as Vice-Director of the CT led to my nomination and subsequent appointment by the UFES Rector to the position of Superintendent Director of the UFES Institute of Technology (ITUFES) in June 2001 (a position I held concurrently with the Vice-Director position of the CT until September 2002). As Superintendent Director of ITUFES, I worked to ensure its continued existence, which was threatened, and to strengthen it. I also worked to strengthen the Espírito Santo Foundation for Technology (FEST), where I served as Vice-President of the Board of Directors and where I continue to serve as a member of the Board of Directors to this day. ITUFES and FEST play an important role today for the CT, UFES, and society at large in their areas of activity.

In August 2003, I was appointed to the position of Pro-Rector for Planning and Institutional Development at UFES and left the Vice-Directorship of the CT. With the election of a new Rector at the end of 2003, I left the Pro-Rector position in January 2004. During this period as Pro-Rector for Planning and Institutional Development, I coordinated the initial implementation process of the Pro-Rectorate for Planning and Institutional Development (PROPLAN). PROPLAN did not exist before this period; it was (re)created and I assumed the role as its first Pro-Rector, having carried out activities to coordinate the adaptation of its initial provisional operating location and the development of its first work plan.

In September 2004, I was reappointed by the new UFES Rector to the position of Pro-Rector for Planning and Institutional Development and remained Pro-Rector until January 2008. During this period as Pro-Rector for Planning and Institutional Development, I led the process of establishing PROPLAN at its current operating location. I coordinated the formation of its administrative staff and the drafting of the ordinances that structured it institutionally. Having given PROPLAN the form it retains to this day, I initiated the process of defining guidelines for carrying out strategic planning at UFES and subsequently coordinated the execution of the university's first Strategic Plan [12]. After the planning cycle was completed, I coordinated the implementation of the University's strategic management mechanisms, based on monitoring the planned actions. I worked, in accordance with the 2005–2010 Strategic Plan, on the Expansion of UFES's Interiorization and on coordinating the preparation of UFES's REUNI Project.

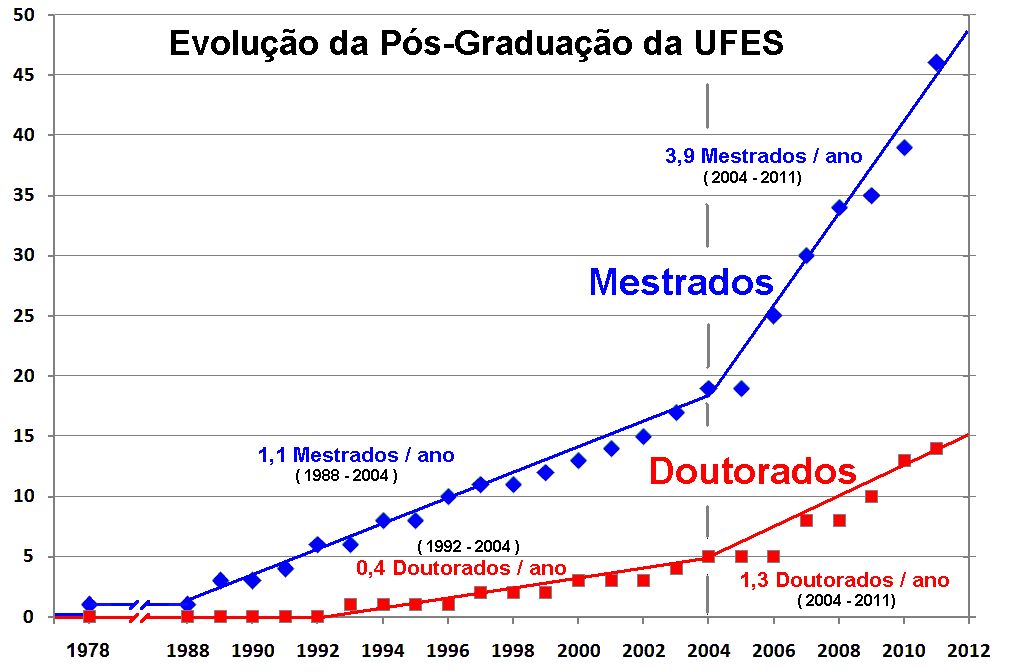

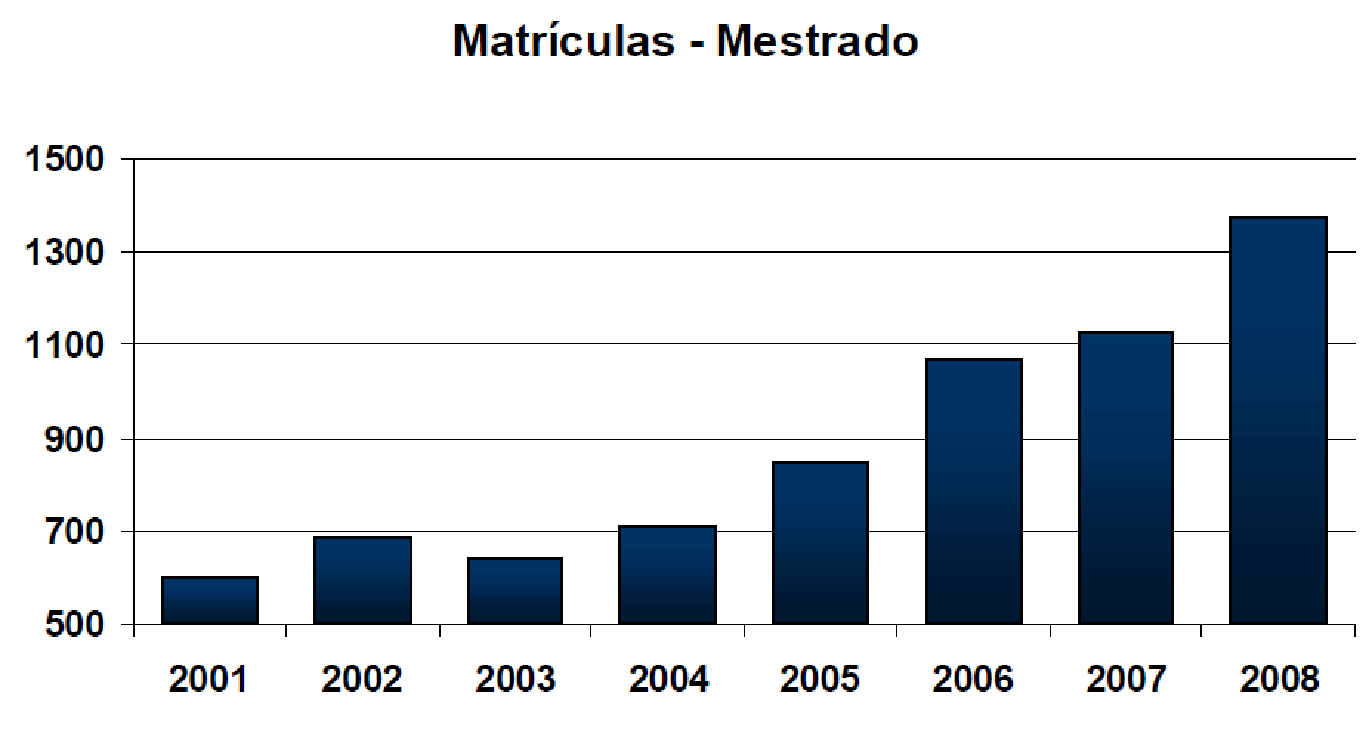

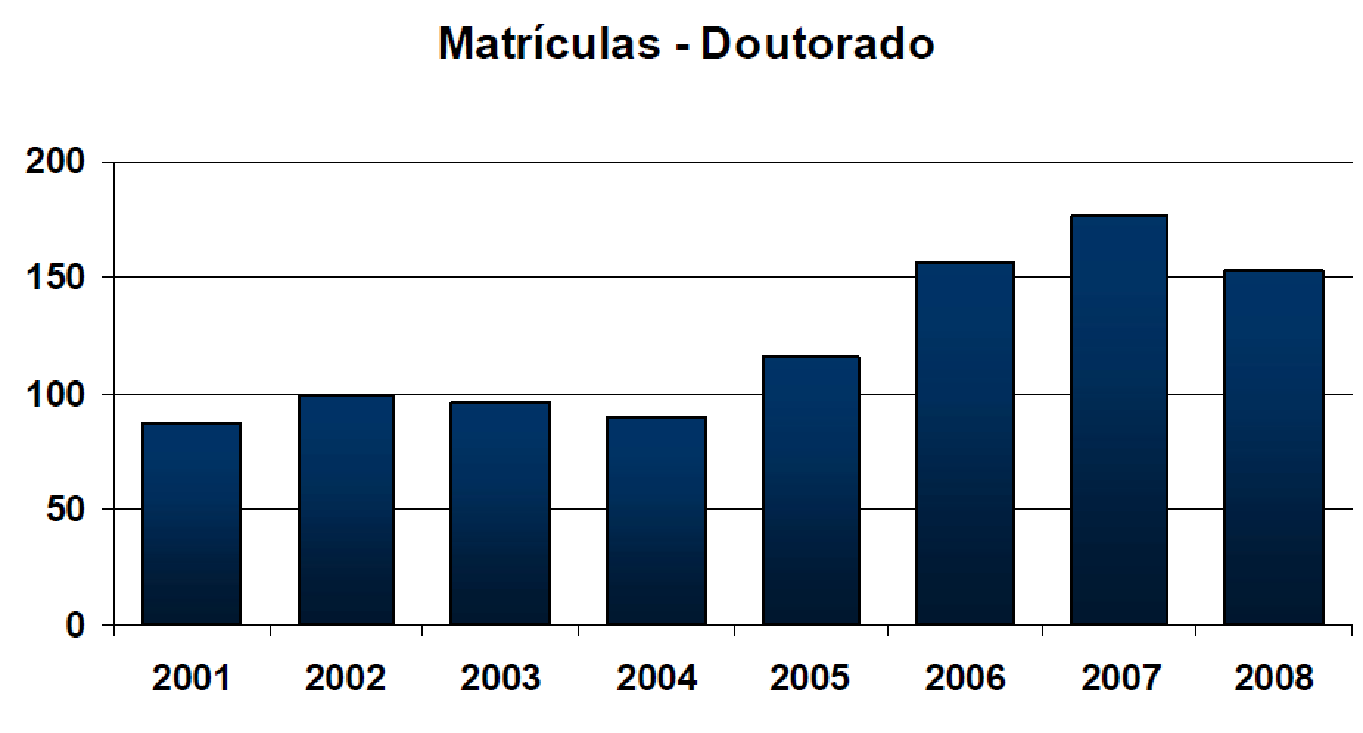

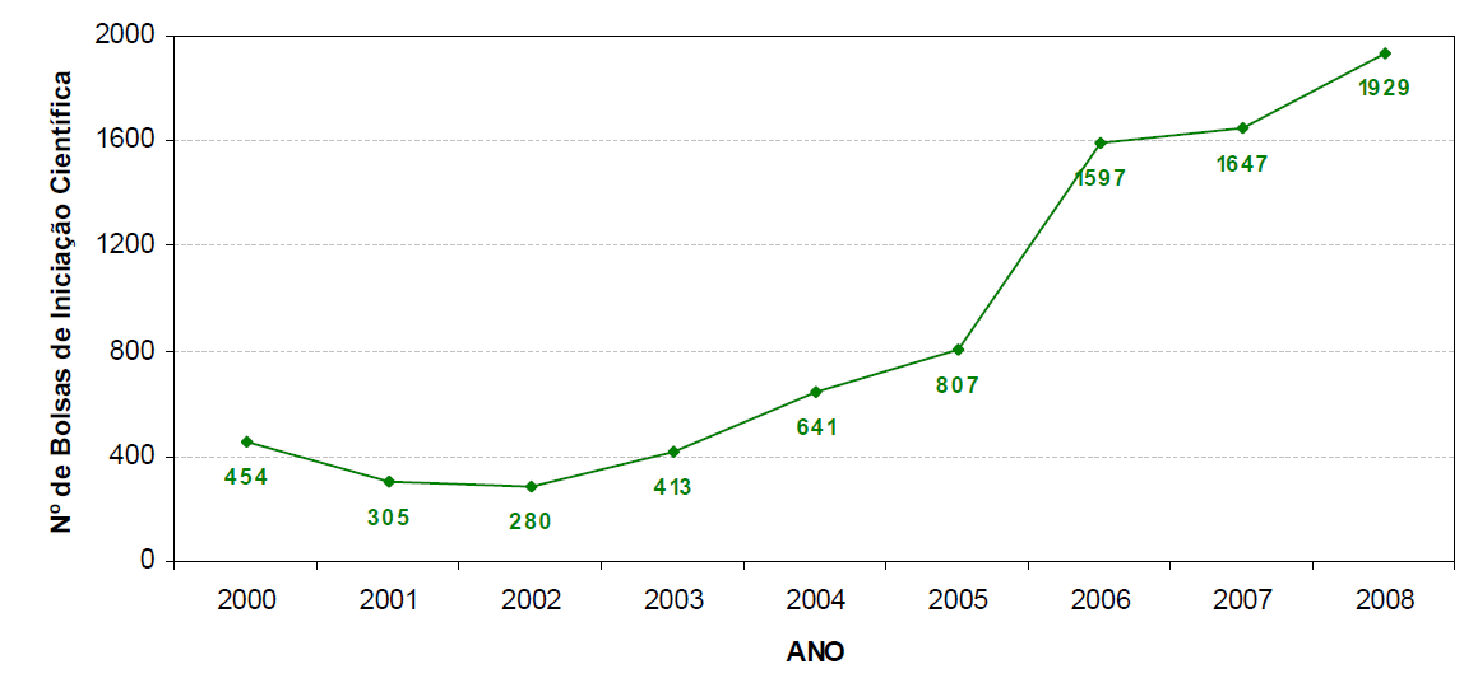

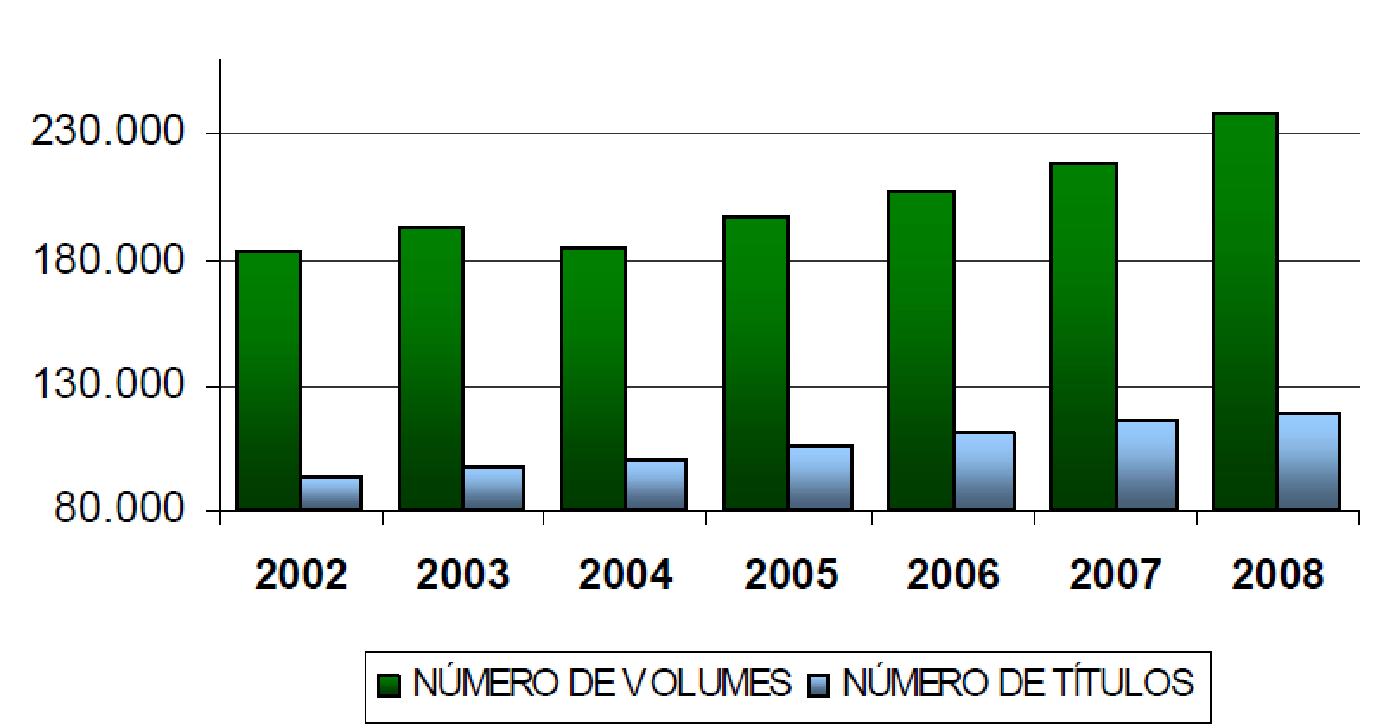

Our work leading PROPLAN, in partnership with the other Pro-Rectors and with the support of the Rectorate, contributed to the realization of most of what was planned during my tenure as Pro-Rector. Among the various achievements foreseen in the plan and accomplished by the institution, I highlight the evolution of graduate studies at UFES, with a visible change in the rate of increase in the number of master's and doctoral programs at UFES during the period I served as Pro-Rector (2004 to 2007, Figure 13), as well as the evolution in graduate enrollment numbers (Figure 14 and Figure 15), the number of undergraduate research fellowships (Figure 16), and the growth of the UFES library system's collection (Figure 17). It is worth highlighting the work of Professor Francisco Guilherme Emmerich, Pro-Rector for Research and Graduate Studies, in achieving these results during the period I was Pro-Rector for Planning and also in the following 4 years.

After I left PROPLAN, I dedicated myself to creating the Doctoral Program in Computer Science at UFES. As a member of the Graduate Program in Informatics (PPGI) at UFES, I proposed and was elected coordinator of the Committee to Develop a Project and Define Strategic Actions Aimed at Creating the Doctoral Program in Computer Science at UFES (CDOC) in June 2008. In April 2009, the project was completed and submitted to CAPES — at the time, PPGI still held a grade of 3 from CAPES. In August 2009, I was elected Coordinator of PPGI and, by the time I left the program's coordination in July 2011, the Doctoral Program in Computer Science had already been approved (in April 2010) and PPGI held a grade of 4 from CAPES.

In April 2012, I was appointed Director of Research at UFES, a position I held until March 2013. During the period I held the position of Director of Research, I dedicated myself to strengthening UFES's Scientific Initiation Conference and to creating PRPPG programs foreseen in UFES's Strategic Plan, such as the UFES Research Support Fund.

At the end of this period of more than 10 years in university administration (1999–2013), UFES in general and the DI in particular had advanced significantly and, today, the DI (i) has physical space for research activities, especially laboratory space, (ii) has adequate hardware for research, and (iii) has bibliographic resources to keep up with the state of the art in the department's areas of interest. I feel gratified for having contributed directly or indirectly to the achievement of these advances.

7. Return to Brazil — Research, Teaching, and Extension (1999–2015)

Throughout the entire period I was involved in UFES administration, I never ceased developing research, teaching, and extension activities. As soon as I returned from my doctorate (in 1999), however, the great challenge was the lack of appropriate infrastructure for research, especially for the main research topic I was working on at the time — processor microarchitecture.

Research in the area of processor microarchitecture demands a large amount of computational resources for simulating the microarchitectures under investigation. Due to the need for computational resources to conduct experiments, I joined other colleagues from the DI and founded a High-Performance Computing research group (originally at http://dgp.cnpq.br/dgp/espelhogrupo/5883089247558529, now at http://dgp.cnpq.br/dgp/espelhogrupo/2805153253373085). The group's efforts resulted in obtaining funding from the National Petroleum Agency to build a 65-processor cluster. The Enterprise Cluster [13] (Figure 18) was completed in 2003 and, as soon as it became operational in January 2003, it ranked 48th on the list of the world's most powerful clusters.

Also in 2003, my first CNPq Research Productivity Fellowship project, titled "Advanced Processor Architectures," was approved. From this project onward and up to the present day, my research, extension, and graduate teaching and undergraduate research activities have been guided by my CNPq Research Productivity (PQ) projects.

7.1. PQ Project 2003–2004 — Advanced Processor Architectures

In the years 2003 and 2004, we carried out research, teaching, and extension activities that enabled: (i) theoretical advances in the area of computer architecture and high-performance computing; (ii) theoretical advances in the area of artificial visual cognition; (iii) the development of technology that resulted in the implementation of a product prototype and two patents; (iv) the consolidation of our local research group, with four doctoral-level researchers, one doctoral student, and several master's and undergraduate students; and (v) the improvement of our group's research infrastructure, with the creation of a laboratory and implementation of a 65-processor cluster.

7.1.1. Theoretical Advances in Computer Architecture and High-Performance Computing

7.1.1.1. Energy Consumption and Heat Dissipation

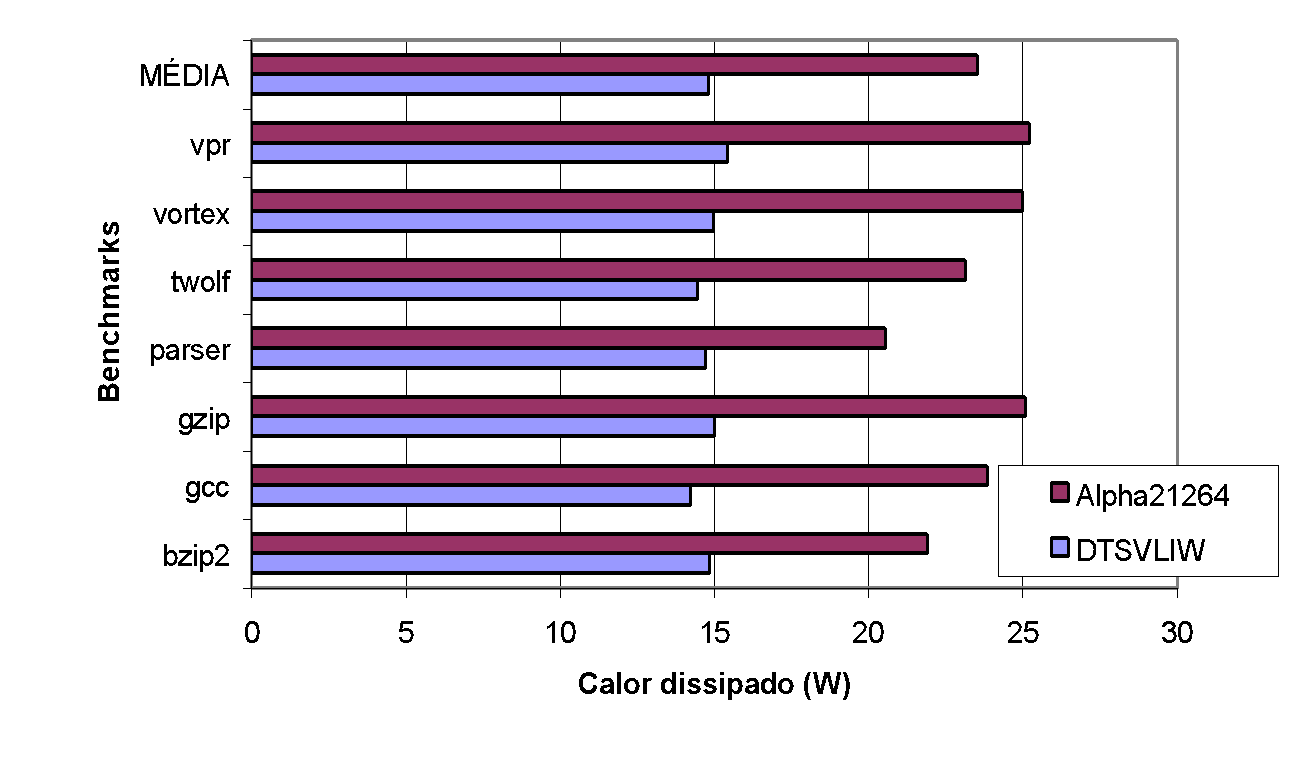

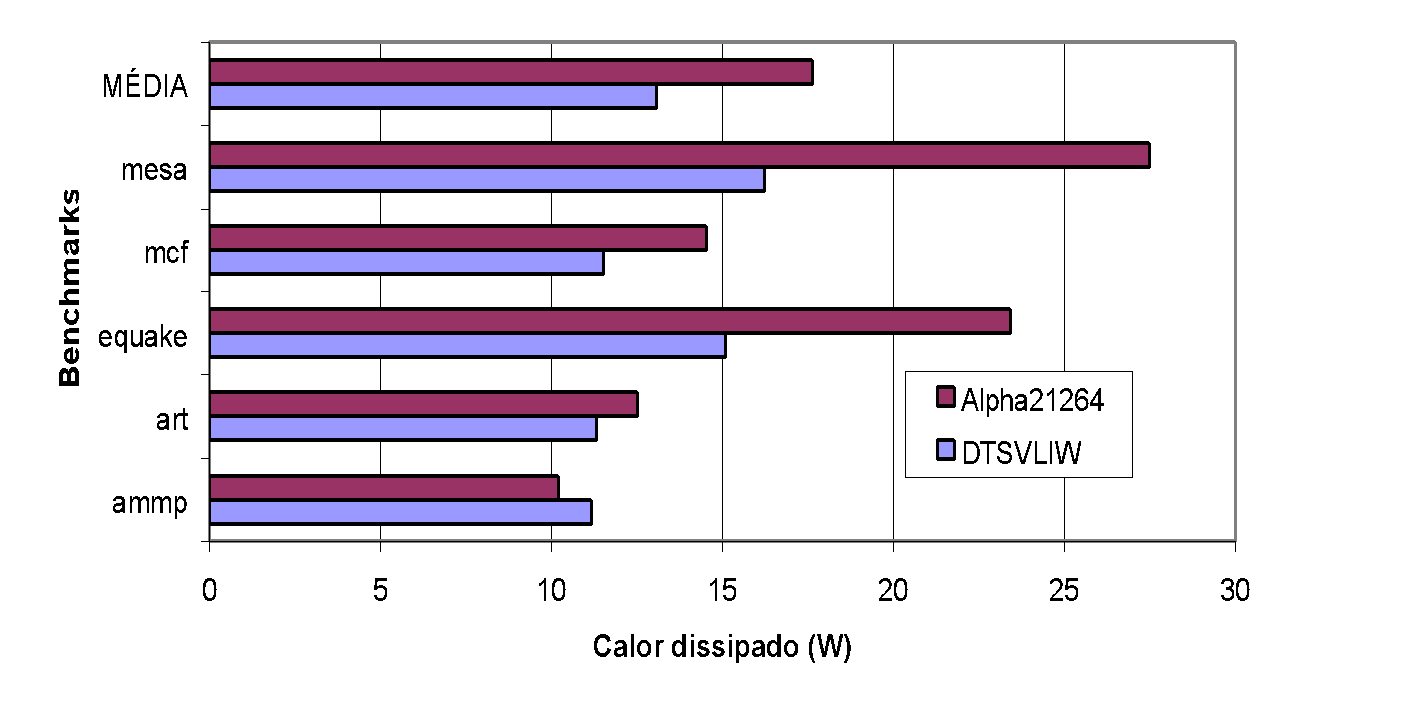

In this research work, we experimentally analyzed the dynamic and static energy consumption of the DTSVLIW architecture [14]. Dynamic energy consumption results from the charging and discharging of capacitors formed by: (i) the interconnections between the various hardware structures existing in the processor, and (ii) the various parts of the CMOS transistors that implement these structures. Static consumption, in turn, results from the leakage current of the CMOS transistors. Nearly all the energy consumed by processors is transformed into heat, and this heat must be dissipated; otherwise, the temperature increase resulting from the failure to dissipate the heat can damage the processors. To analyze energy consumption and dissipated heat separately, we first measured the heat dissipated during the execution of test programs, in Watts, and then the energy spent per instruction, in Joules/instruction, during the execution of these programs.

To carry out the experiments, we implemented a version of our DTSVLIW simulator with consumption meters. To place the results of the study in the context of processors existing at the time of the project, we compared the energy consumption of a hypothetical implementation of the DTSVLIW architecture with hardware equivalent to the Alpha 21264 processor [15]. To evaluate the dynamic energy consumption of the Alpha 21264 processor, we used the Wattch simulator [16]; while for evaluating static consumption, we used the Hotleakage simulator [17], both publicly available. Both our DTSVLIW simulator and the Wattch and Hotleakage simulators are based on the SimpleScalar-3.0 simulator (www.simplescalar.com), which enabled an appropriate comparison between the experimental measurements of the two architectures (DTSVLIW and Superscalar).

Both simulators used (DTSVLIW and Alpha 21264) interpret executable programs produced by standard compilers that generate code for the Alpha Instruction Set Architecture (ISA). In the experiments, we used a subset of the SPEC2000 executable suite (www.specbench.org) provided with the SimpleScalar simulator. These executables were produced on an Alpha 21264 machine running the Digital UNIX V4.0F operating system, and were compiled by the DEC C V5.9-008 compiler (Rev. 1229), or by the Compaq C++ V6.2-024 compiler (Rev. 1229), or by the Compaq Fortran V5.3-915 compiler (f77 and f90). As inputs for the SPEC2000 programs, we used the input set developed at the University of Minnesota [18]. With this input set, the SPEC2000 programs selected by the researchers at that university require only a few billion instructions for their execution (approximately one second of processing on an Alpha machine of that era), but this number of instructions is sufficient to capture the processor's performance when executing these programs18.

We used four types of fabrication technology in the experiments: 70nm, 100nm, 130nm, and 180nm. However, since the results obtained for dynamic consumption and dissipation vary only by a scaling factor from one technology to another, we present here only the results for the 180nm technology (this was the technology employed in the fabrication of the 800MHz Alpha 21264). We used a supply voltage of 2V, a working frequency of 1GHz, and a CMOS transistor threshold voltage of 0.55V1414.

A strategy used to reduce energy consumption and heat dissipation in processors is conditional clocking, where functional units not used in a cycle do not receive a clock pulse. To model the conditional clocking technique, the consumption and dissipation of each hardware unit in our simulators were scaled linearly with the number of accesses to them — that is, if in a given cycle a unit is accessed, its full consumption is accounted for; otherwise, it is not. However, we added consumption equivalent to 10% of the maximum consumption of each unit when the unit is not accessed, a percentage typically used by the industry [16].

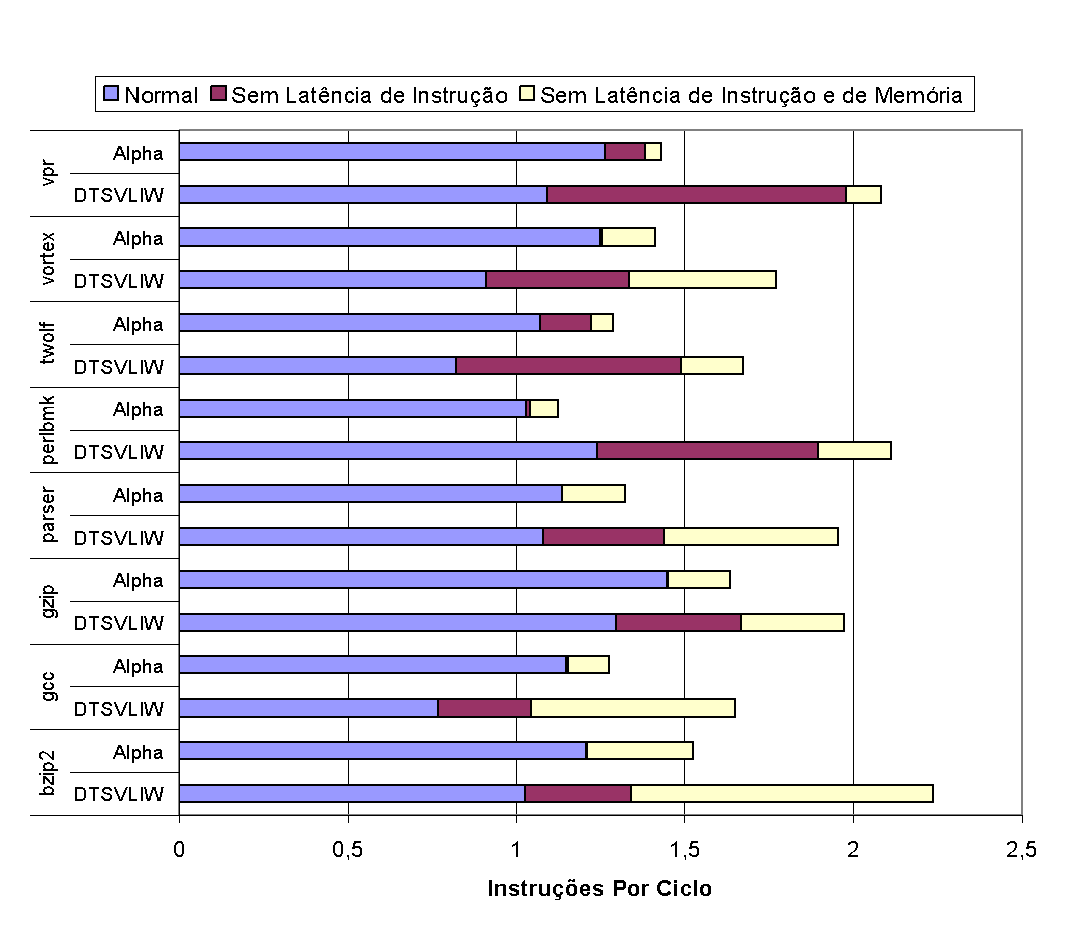

Figure 19 and Figure 20 below present graphs with the dynamic heat dissipation measured in our experiments with integer and floating point programs from SPEC2000, respectively.

As the graphs in Figure 19 and Figure 20 show, the heat dissipated by the DTSVLIW processor is significantly lower than that dissipated by the Alpha 21264 for both integer and floating point programs. The heat dissipated by the DTSVLIW is lower than the Alpha 21264 for all integer programs, with an average of 14.80W compared to 23.54W for the Alpha 21264 — that is, 37.12% less dissipation on average. For floating point programs, the heat dissipated by the DTSVLIW is significantly lower than the Alpha 21264 for the mesa and equake programs, but this difference is less pronounced in the cases of mcf, art, and ammp, and in the case of the latter, the Alpha 21264 outperforms the DTSVLIW (we will discuss these cases further ahead). However, on average, the DTSVLIW dissipates 13.06W compared to 17.63W for the Alpha 21264 — that is, the amount of heat dissipated by the DTSVLIW in floating point programs was 25.92% lower than the amount dissipated by the Alpha 2126414.

The DTSVLIW architecture dissipates less heat because it executes programs in two modes: a scalar mode, when, in addition to executing code on the Primary Processor, its Scheduling Unit schedules and saves the code in the form of VLIW instructions; and a VLIW mode, when the VLIW instructions are executed on the VLIW Machine. The DTSVLIW executes code in VLIW mode during most clock cycles; thus, its scheduling unit does not receive clock pulses and its heat dissipation is greatly reduced in this mode. The Superscalar architecture of the Alpha 21264, on the other hand, schedules code in every cycle to extract its ILP; therefore, the scheduling hardware of this processor must receive clock pulses during virtually the entire execution. In addition to receiving clock pulses virtually all the time, the scheduling hardware must receive the results produced by the functional units and use them to enable new instructions awaiting results to be dispatched for execution. That is, the three parts of the Superscalar scheduling hardware produce the observed heat dissipation differences, namely: (i) the logic for dispatching instructions coming from the fetch stages to the reservation stations, (ii) the logic used to propagate results to the reservation stations and enable ready instructions, and (iii) the logic for issuing these ready instructions from the reservation stations to the functional units.

As can be seen in the graph of Figure 20, in the case of the mcf, art, and ammp programs, the dissipation of the DTSVLIW processor approaches that of the Alpha 21264, and in the case of the ammp program, the dissipation of the Alpha 21264 is lower than that of the DTSVLIW. To understand these results, it is necessary to examine the effect of memory hierarchy latency on the performance of these processors.

In Figure 21 and Figure 22, we present the results of experiments conducted to examine the impact of instruction and memory hierarchy latencies on the performance (in terms of instructions executed per cycle) of DTSVLIW and Superscalar processors [19]. For each horizontal bar in Figure 21 and Figure 22, the length of the first segment (from left to right) indicates the performance obtained by the machine for each program considering normal latencies, the width of the bar up to the far right of the second segment indicates what the machine's performance would be if the latency of all instructions were equal to 1, and the width of the entire bar indicates what the machine's performance would be with instruction latency equal to 1 and perfect caches.

As the graphs in Figure 21 and Figure 22 show, considering all latencies, the Alpha machine has higher performance than the DTSVLIW in all integer programs except perlbmk, while the opposite occurs with floating point programs, where the DTSVLIW machine has better performance than the Alpha in all programs except art and mcf. On average (harmonic mean19), the Alpha exhibits 18.4% higher performance than the DTSVLIW for the integer programs tested and 8.3% for the floating point programs. However, it is important to note that, when instruction latencies are disregarded in both machines, the DTSVLIW surpasses the Alpha in integer programs by a wide margin and achieves the same average performance in floating point programs. That is, the Superscalar architecture demonstrated greater ability to minimize the impact of instruction latencies, which was expected. The performance measured without considering instruction and memory latencies shows the DTSVLIW architecture further ahead of the Superscalar: the DTSVLIW surpasses the Superscalar by a wide margin in all programs. This was expected, given that the DTSVLIW's Scheduling List has the capacity to accommodate more instructions than the Superscalar's issue queues19.

The heat dissipation of the DTSVLIW processor approaches that of the Alpha 21264 in the floating point programs mcf, art, and ammp because, in these programs, both processors spend most machine cycles waiting for memory accesses. Since their hardware is equivalent, their consumption when idle is practically the same.

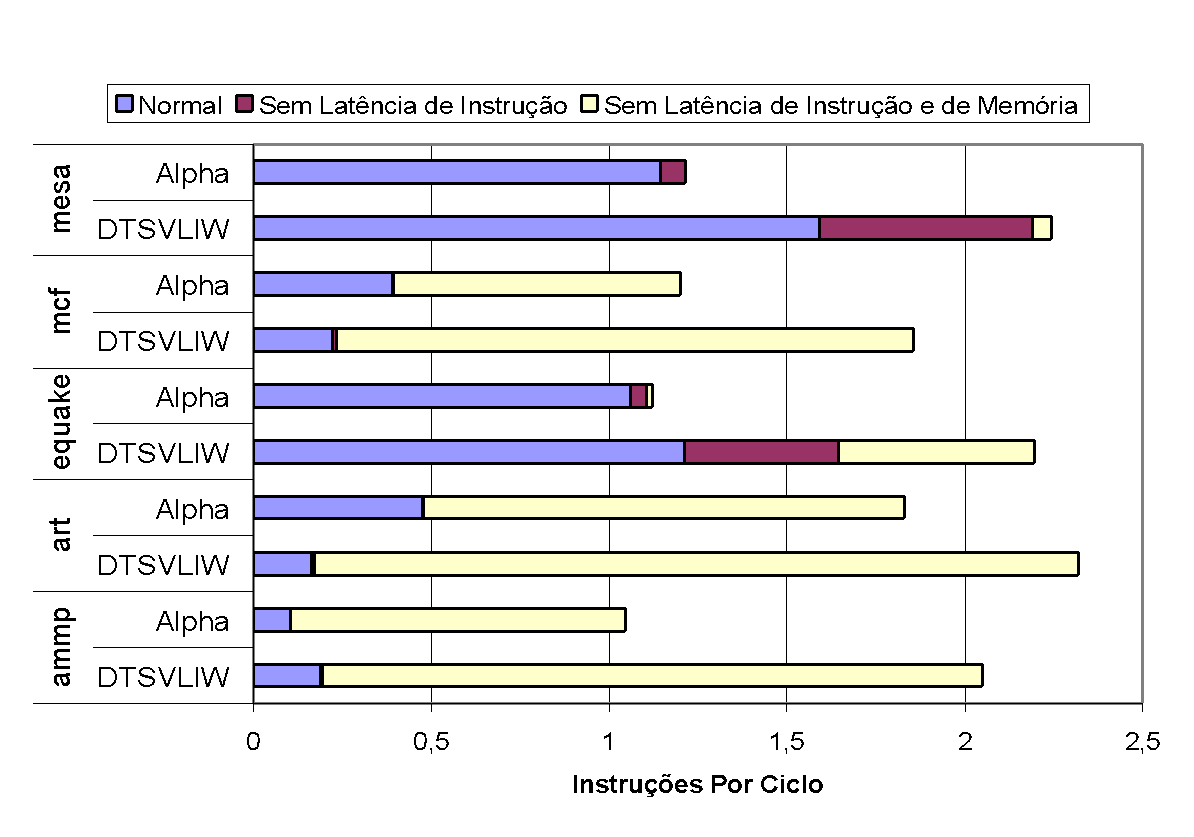

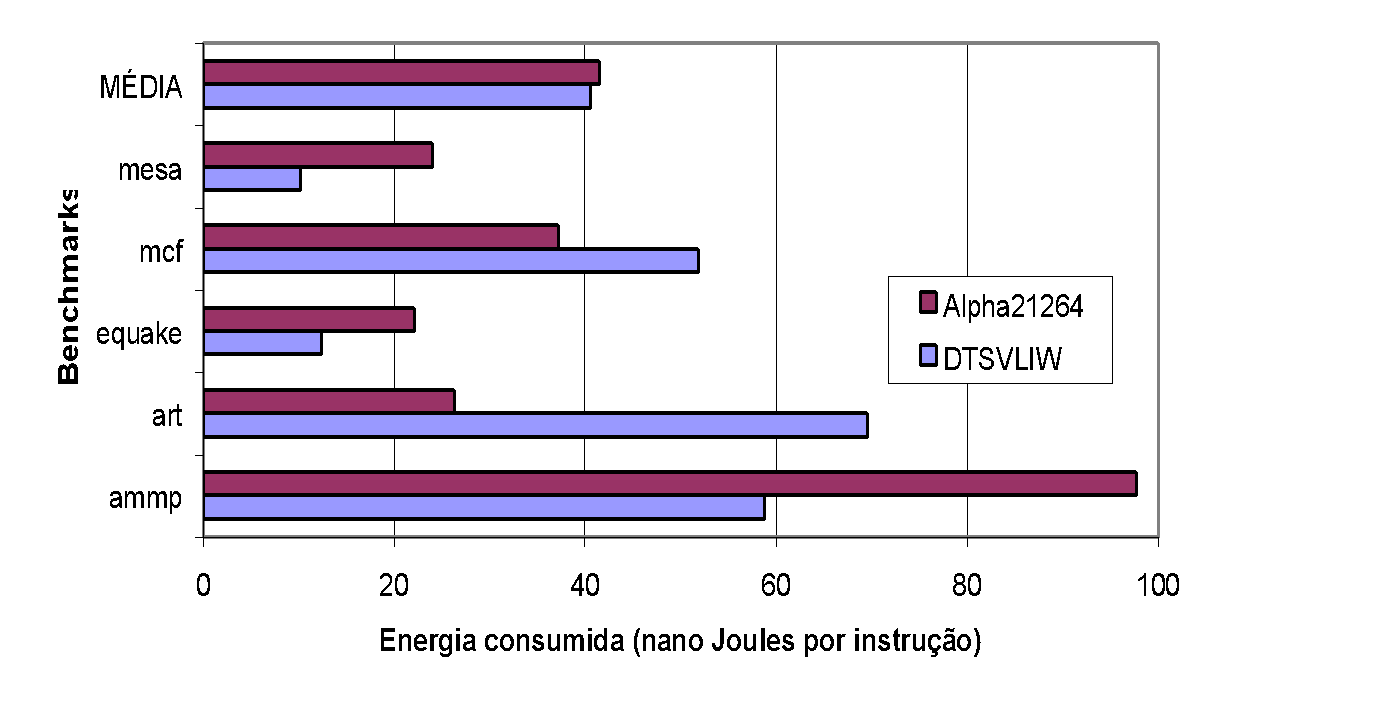

7.1.1.2. Energy Consumption

We used the energy dissipation in the form of heat presented in Figure 19 and Figure 20, together with the performance obtained in the experiments summarized in Figure 21 and Figure 22, to compute the energy consumption, in terms of Joules per instruction, of the processors under study. To do this, we divided the values in Watts (Joules/second) from Figure 19 and Figure 20 by the values in instructions per cycle from Figure 19 and Figure 20 (instructions per nanosecond, in fact, since the clock frequency used in the experiments is 1GHz), and obtained the graphs in Figure 23 and Figure 24, which show the energy consumption of each processor in nanoJoules per instruction. In the calculations, we used the length of the first segment of the graphs in Figure 19 and Figure 20.

As can be seen in the graph of Figure 23, the energy consumption per instruction executed on the DTSVLIW processor is lower than on the Alpha 21264 processor for all integer programs tested — on average 26.07% lower. For the case of floating point programs, the DTSVLIW processor consumes less energy per instruction executed than the Alpha 21264 in the mesa, equake, and ammp programs, while the Alpha 21264 processor consumes less than the DTSVLIW in mcf and art (Figure 24). The difference in energy consumption per instruction is smaller in the case of floating point programs, but still favors the DTSVLIW, which consumes on average 2.15% less energy per instruction than the Alpha 21264.

The pattern of energy consumption per instruction in floating point programs observed in the two processors is quite distinct from that observed in integer programs. While in integer programs the DTSVLIW architecture produces better results in all cases, in floating point programs the Superscalar architecture of the Alpha 21264 presents better results by a wide margin in two cases. This can also be understood by analyzing the impact of memory latency on the performance of each of these architectures.

As can be seen in the graph of Figure 22, for the mcf, art, and ammp programs, the DTSVLIW and the Alpha 21264 have their performance strongly affected by the memory hierarchy latency. Both machines spend most clock cycles waiting for data to be read from or written to memory rather than using their hardware to execute instructions. Under these circumstances, their energy consumption is mainly associated with the consumption of functional units and caches when they are not receiving clock pulses (10% of maximum consumption). But this energy is spent without performing useful work and, for this reason, the energy cost per useful instruction is high in the case of these three programs.

The analysis of heat dissipation, performance, and energy consumption of the two architectures in the case of the ammp program illustrates well the interrelationships between the variables under study. Examining the graph of Figure 20, it is possible to verify that, when executing this program (and in our experiments only this program), the DTSVLIW dissipates more heat than the Alpha. But, observing the performance of the two architectures for this program in Figure 22, we see that the DTSVLIW performance is almost double that of the Alpha. This explains why the energy consumed per instruction during DTSVLIW execution of this program is much lower than during Alpha execution, as can be verified in Figure 24.

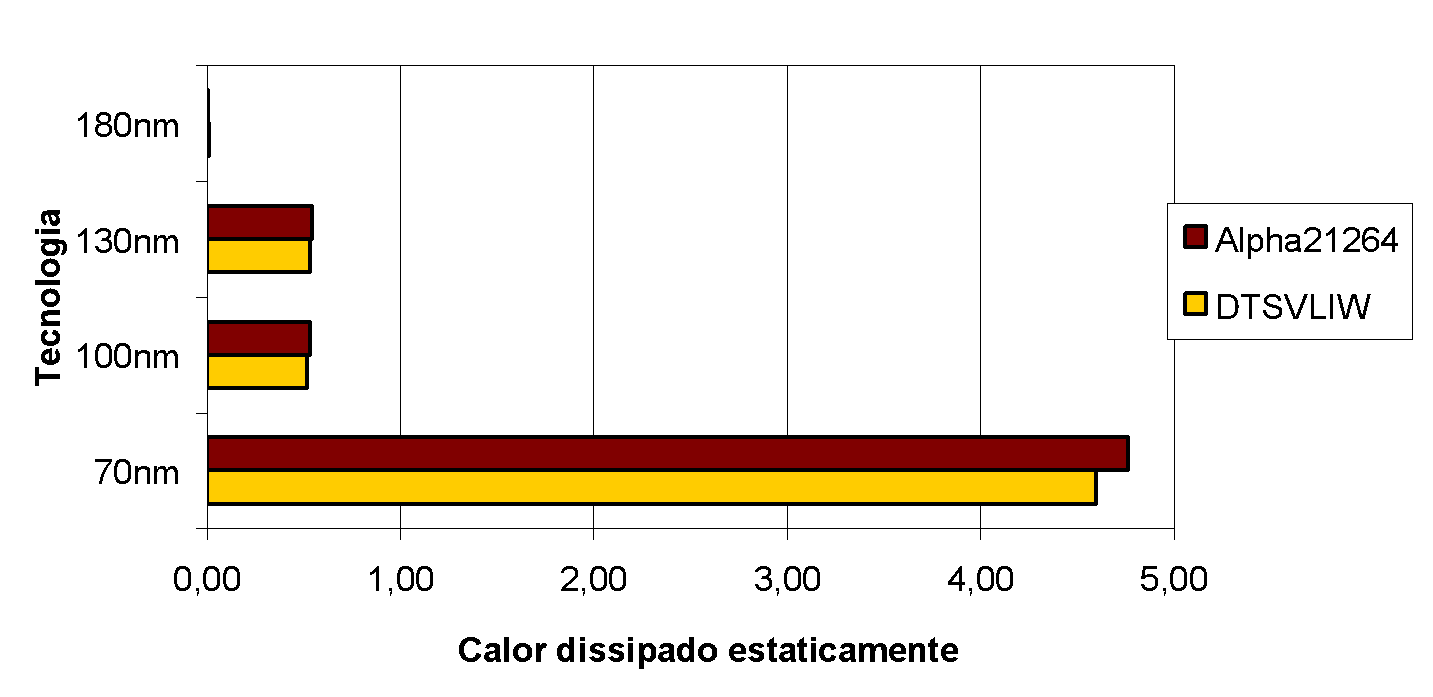

7.1.1.3. Statically Dissipated Heat

The graph in Figure 25 presents the statically dissipated heat in the two processors studied for the technologies used in the experiments. It shows that static heat dissipation is very small in both processors for an implementation with 180nm technology, but begins to become significant starting from 70nm. The amount of statically dissipated heat in the DTSVLIW and Alpha 21264 processors modeled is, however, very close, as can be seen in Figure 25, since the hardware of the two processors was made as equivalent as possible14.

In 2004, the trend for the future was for static heat dissipation to surpass dynamic dissipation [20]. However, various chip implementation techniques have been and are being studied to limit static heat dissipation, such as high-k dielectrics20 and carbon nanotube-based transistors [21], for example.

7.1.1.4. Impact of Memory Latency on DTSVLIW Performance

In Figure 21 and Figure 22, the results of a study conducted to examine the impact of instruction and memory hierarchy latencies on DTSVLIW performance were presented19. This study showed that the main advantage of the DTSVLIW architecture over the Superscalar is the simplicity and effectiveness of its instruction scheduler. It also showed that the main disadvantage of the DTSVLIW relative to the Superscalar is its limited ability to inhibit the negative effect imposed by main memory access latency on performance19. In seeking to reduce the impact of memory hierarchy latency on DTSVLIW performance, we developed a version of this architecture with multiple execution contexts implemented in hardware [22]. A machine with multiple contexts implemented in hardware, or multithreaded [23],[24], has two or more replicas of the internal structures (basically registers) responsible for storing the machine state (context). Thus, the machine can switch from executing one program to executing another very quickly. With this capability, upon detecting a cache miss that forces a main memory access, the machine could switch the running program in the hope of finding this other program in a condition to execute useful instructions.

Our results showed that the use of multiple execution contexts implemented in hardware can be an alternative to mitigate the negative effect of memory hierarchy latency on DTSVLIW performance22. Figure 26 and Figure 27 below show these results.

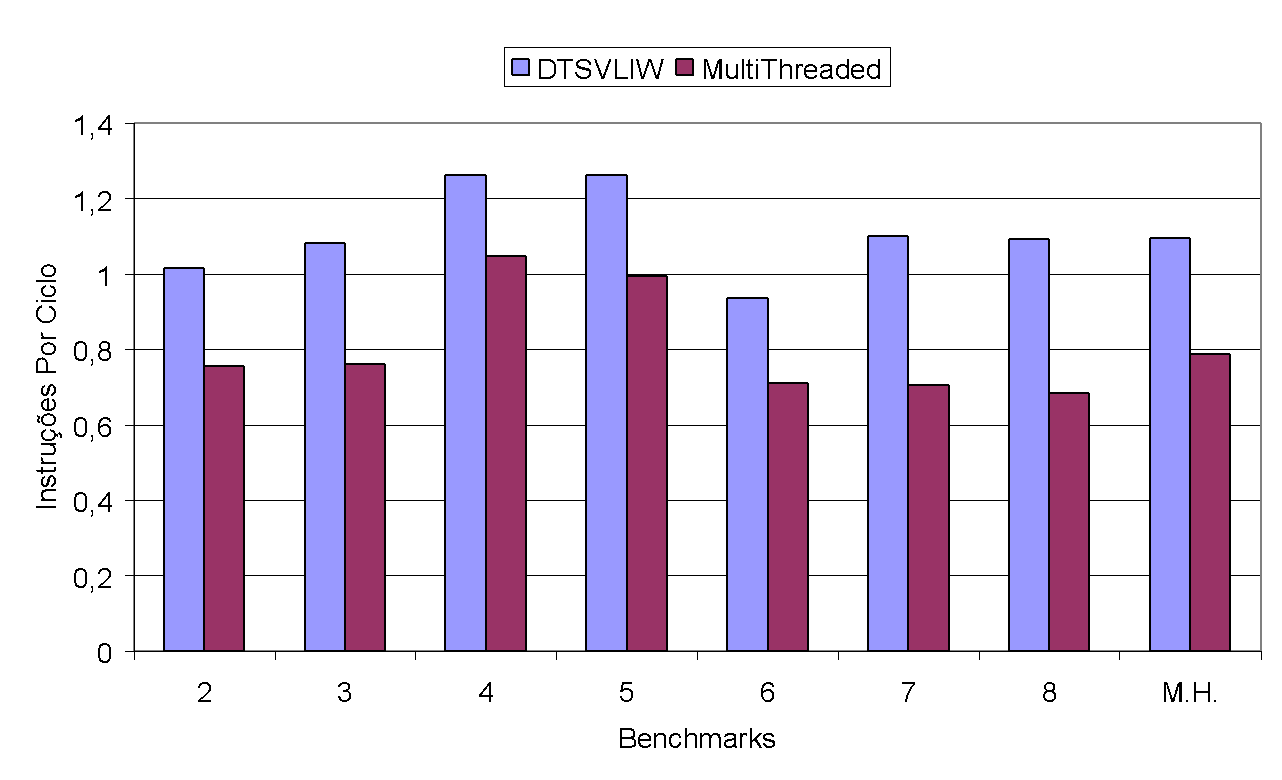

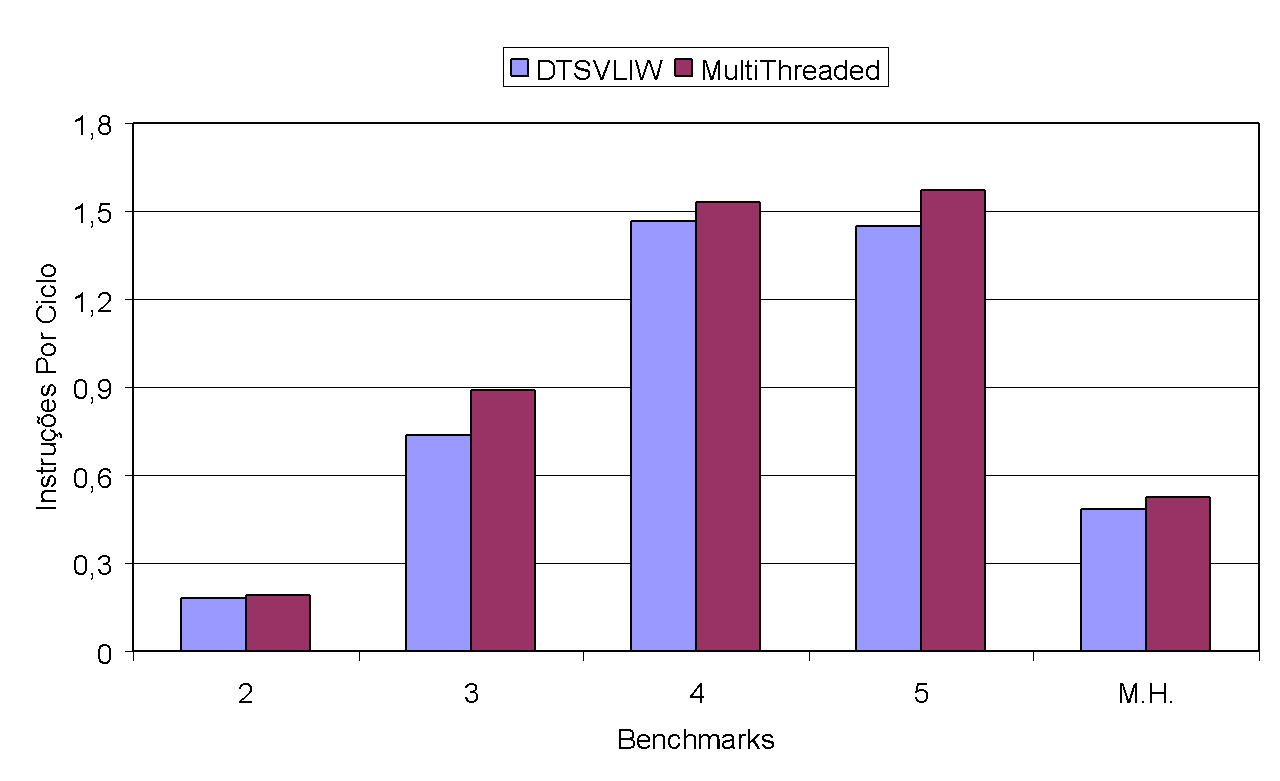

To produce the experimental results shown in Figure 26 and Figure 27, we varied the number of contexts between 2 and 8. The Multithreaded performance shown in these figures equals the sum of the number of instructions executed in each context of each experiment divided by the total number of cycles needed to execute all contexts of each experiment. The DTSVLIW performance, in turn, is the sum of the number of instructions executed by each of the programs corresponding to each context of the Multithreaded execution (executed individually up to the same point that each of them reached in the Multithreaded execution) divided by the sum of the number of cycles needed for the individual executions. The test programs used were those from SPEC2000, under conditions identical to those used in the experiments discussed previously.

As the graph in Figure 26 shows, the performance of the DTSVLIW architecture version with multiple contexts was lower than that of the version with only one context for all integer programs — a decrease of up to 37.5% (8 benchmarks). However, in the case of floating point programs (Figure 27), the performance of the DTSVLIW with multiple contexts is superior (by up to 20.5%, as in the case with 3 benchmarks) to the DTSVLIW for any number of contexts used. Upon carefully analyzing the results of the experiments conducted, we observed that, in most cases, the VLIW cache was not large enough to accommodate the number of scheduled VLIW blocks.

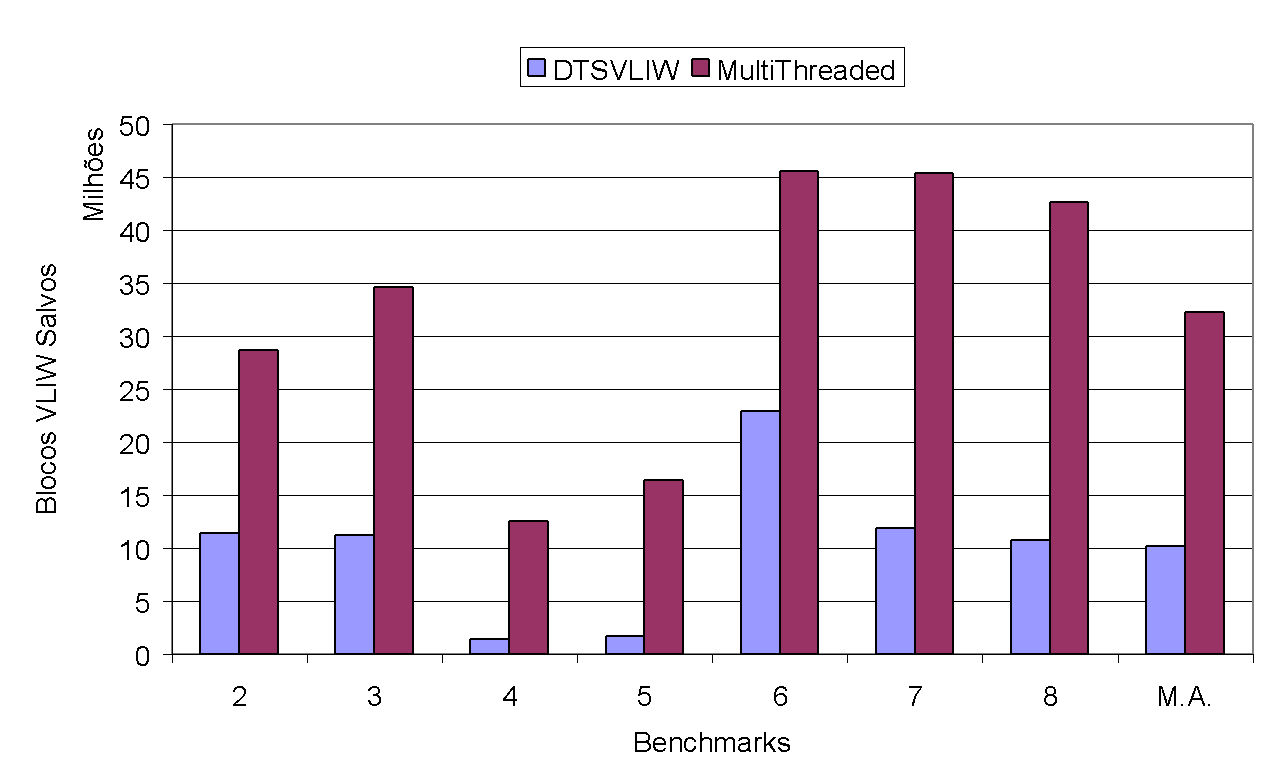

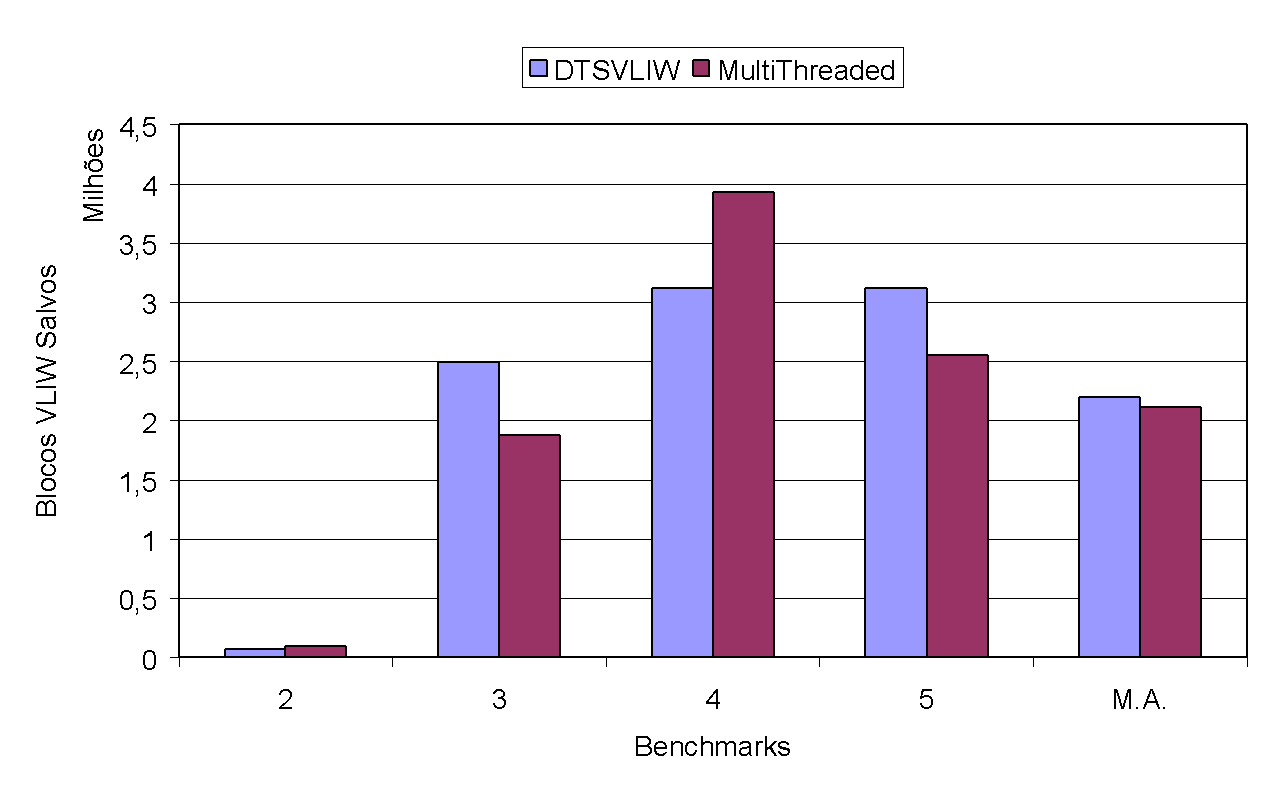

As can be seen in the graph of Figure 28, where we show the number of VLIW instruction blocks scheduled for integer programs, the DTSVLIW with multiple contexts scheduled more than triple the number of blocks compared to the DTSVLIW (in the graph, M.A. stands for arithmetic mean). The same is not observed in the case of floating point programs, as can be seen in the graph of Figure 29. Thus, it is possible to conclude that the performance difference of the DTSVLIW with multiple contexts when executing integer and floating point programs is due to the fact that integer programs, in general, repeat large code sections, which results in the scheduling of many VLIW blocks that are eventually replaced during the execution of other benchmarks subsequently, forcing the rescheduling of these blocks. On the other hand, floating point programs generally work with repetitions of small code sections that perform operations on a large data set, thus generating fewer VLIW blocks (note the order of magnitude of the graphs in Figure 28 and Figure 29) and, consequently, using less of the VLIW cache.

7.1.2. Theoretical Advances in Artificial Visual Cognition

7.1.2.1. Modeling Vergence Eye Movements

Our visual perception is egocentric, that is, centered on our position in the environment. We normally do not even notice this, but it would be terrible if visual perception were oculocentric (constructed from the eye's position relative to the environment), as the image of the world would move with eye movements. However, in an apparent paradox, the cerebral visual areas responsible for the early stages of visual perception attribute analysis have exactly this arrangement—oculocentric. Even so, when we move our eyes, we feel that the external world is stable, meaning our internal image of the external world exhibits the property of "position constancy." This is just one example of the fact that human visual cognition depends not only on physical factors (the optics of the eye) or the type of photodetector surface (the retina) of the eyes, but also on knowledge of the position of the eyes and body, and the movement of the body and head. Thus, the motor systems influence visual perception, and it is important that there be feedback from the motor systems to the visual perception system. Therefore, the oculomotor system (responsible for eye movements) plays an important role in human visual perception [25].

In this research project, we studied characteristics of the biological visual perception system and the oculomotor system. As a result, we formulated a new model for the control of the oculomotor system related to the vergence movement of the eyes toward a point in space (in three dimensions, or 3D) [26],[27],[28]. The vergence movement adjusts the position of the eyes so that the image of the same point in space is brought to both foveae (the central part of the retina).

7.1.2.2. Determining Position of a Point in Space from Vergence

The human visual system is capable of estimating the distance from the observer to a point in 3D space. Knowing this, and armed with the vergence model we developed28, we modeled a triangulation mechanism that, from a point chosen by an operator in space and the known distance between two appropriately aligned cameras, allows us to calculate the precise position of the point in 3D space. With the success of our model, we applied for and were awarded resources from the Fund for Science and Technology Support of the Municipality of Vitória (FACITEC) to develop a semi-automatic system capable of remotely measuring the volume of log piles from images captured by cameras [29]. Such a system would allow the work of measuring the wood stock of companies like Aracruz Celulose (now Fíbria), which at the time (2004) was done slowly, imprecisely, and with high risk to workers' lives—since they had to make direct contact with the wood piles to take measurements—to be done precisely, automatically, and with results available online. It is important to note, however, that once the technology was developed for this specific object of study, it could be used in other contexts. In fact, this technology was transferred to the company Mogai (www.mogai.com.br), created by former DI students, through the supervision of PPGI master's students who were Mogai employees.

7.1.2.3. Building an Internal 3D Image of the External World

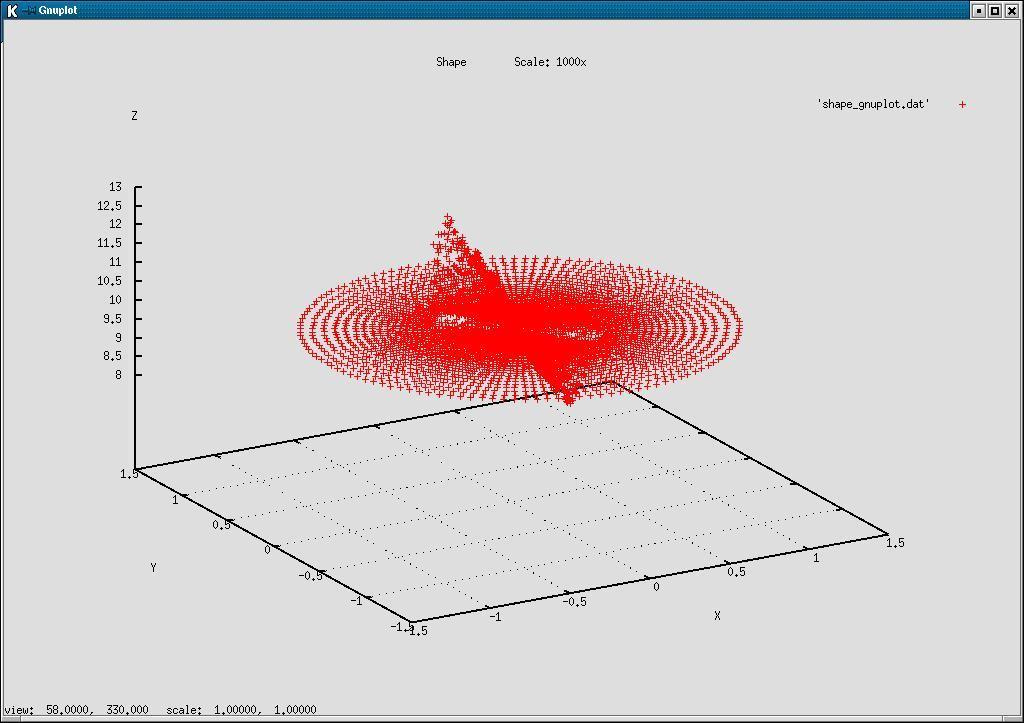

From the composition of known mathematical models of the behavior of human primary visual cortex cells with models we created of the primary visual cortex architecture, we developed a system capable of building a simplified internal representation of the external world. Figure 30(a) shows a pair of computer-generated stereo images, and Figure 30(b) shows the 3D reconstruction generated by our stereoscopic vision system when we take the center of the rod as the vergence point, that is, when our system is looking directly at the center of the rod.

The plane visualized in Figure 30(b) is due to the fact that all depths impossible to compute because of the lack of information in the image (black area in Figure 30(a)) receive a depth equal to the distance to the vergence point. The observer is located at the origin of the XY plane looking upward (in the positive Z direction), so that points above the vergence plane are farther from the observer, while points below the vergence plane are closer. Note that the central point, corresponding to the vergence point, necessarily lies on the vergence plane.

7.1.2.4. Modeling the Architecture and Response of the Primary Visual Cortex

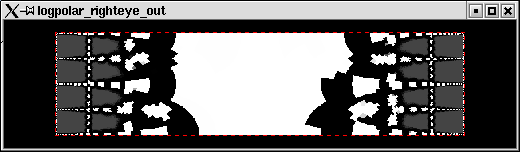

The images projected on our retinas are carried to the brain areas responsible for processing visual information through parallel visual pathways [30]. Evidence shows that each pathway is specialized in a type of information such as color, shape, and motion. At the time of this project, we were studying these pathways and formulating models to simulate the flow of visual information in the human brain. A first success of this effort was our modeling of the Retina–Primary Visual Cortex (V1) mapping [31].

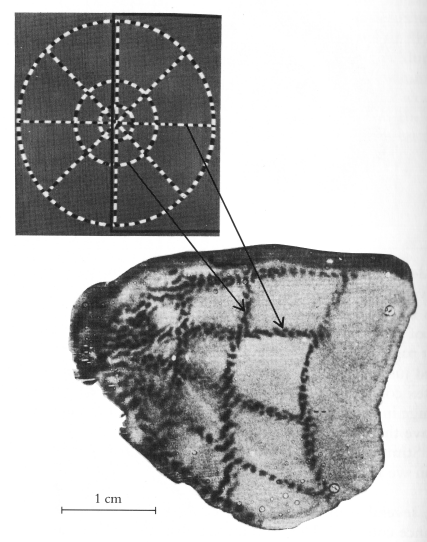

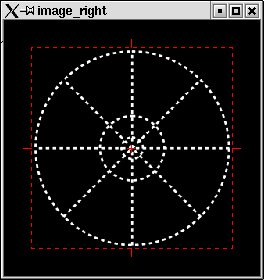

Figure 31 shows the representation of the stimulus produced in cortex V1 by an image containing concentric circles. This representation was obtained by Tootell [32] through the application of radioactive 2-deoxyglucose (an organic compound that is transferred from neuron to neuron along their interconnections) in the eye of a macaque monkey and the control of the fixation of the fovea of that eye on the center of the image. After 45 minutes, the monkey was sacrificed, and the part corresponding to V1 of the left hemisphere of the monkey's brain was cut out, flattened, and placed over a radiation-sensitive photographic film that, when developed, showed the image in Figure 31.

Figure 32 shows the result produced by our V1 model from an input image equivalent to the one used by Tootell. Unlike Tootell's experiment, where only one hemisphere is shown, in the image representing the output of our model, Figure 31(a), both hemispheres are shown. As can be seen in the left part of Figure 31(b), our model produces results quite close to those observed in the biological visual system.

(a)

(b)

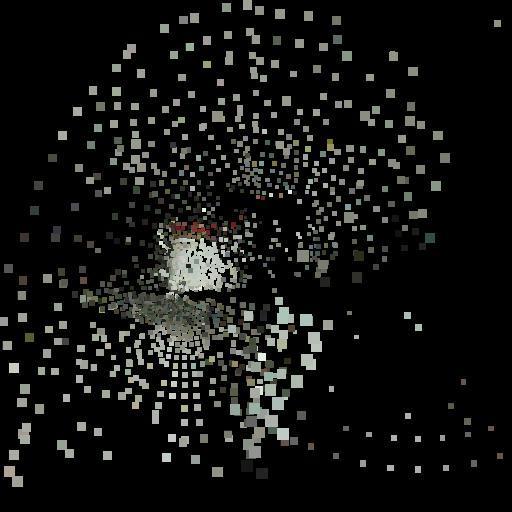

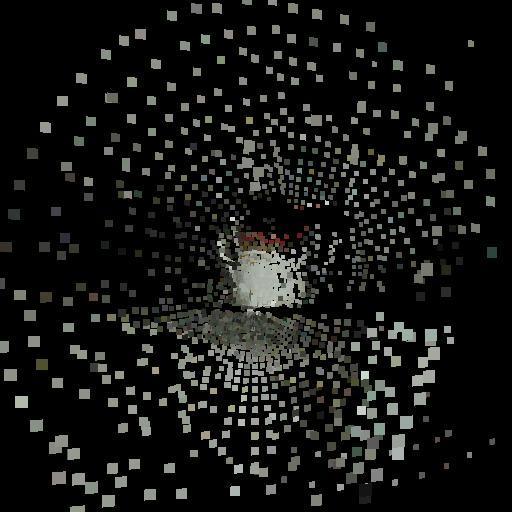

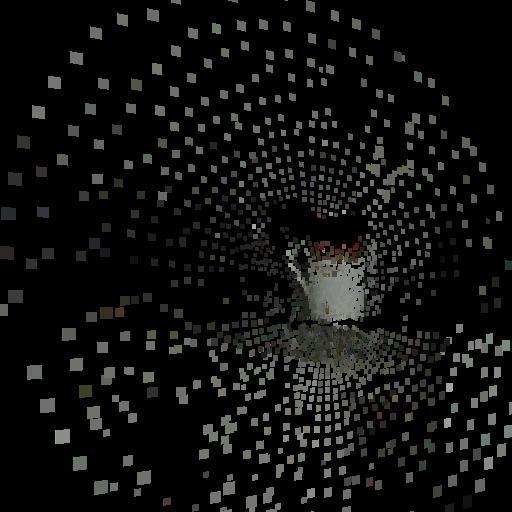

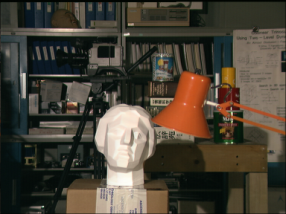

Using our Retina–V1 mapping model and our models of V1 cells sensitive to the difference between the images captured by the left and right eyes, we were able to formulate an internal computer representation of the 3D image of the external world centered on the vergence point of the eyes. Figure 33 shows the result of applying our model in a situation where the vergence point is the mug shown in the figure.

In Figure 33(a), the images captured by the left and right cameras are shown, using a separation between cameras approximately equal to the separation between human eyes (6.5 cm). In Figure 33(b), we present the result of translating the internal computer representation (several planes of cells like the one in Figure 32(b), not shown here) back into a 3D space, from three different angles (without altering the input images, which are the same as in Figure 33(a)). As can be observed in Figure 33, the representation at the vergence point is more detailed, as in the human case (when we look at a specific point, we do not see the surrounding points sharply—try reading the word at the beginning of this paragraph while fixing your gaze on the period that ends it).

(a)

(b)

7.1.3. Technology Development

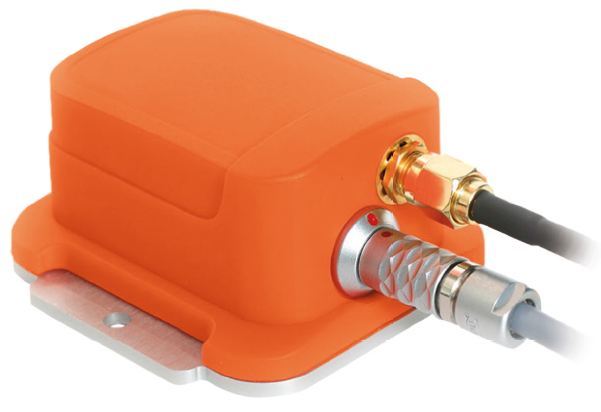

To generate parallel versions of existing programs capable of exploiting the computational capacity of clusters, or to write new parallel programs with this capability, powerful tools for analysis and debugging of parallel code are required [33]. These tools allow detecting imbalances in the distribution of the computational load among the various computers in the cluster, in addition to indicating how time is being distributed between tasks inherent to cluster processing, such as message passing and synchronization, and useful computation. A fundamental component of all parallel code analysis and debugging tools is the global clock33. Global clocks allow mapping in time the relevant events of a computation. With such a mapping, it is possible to identify how the various processors of a cluster are being utilized, identify bottlenecks, distribute the load, and thus produce efficient parallel code. During the two years of this project, we developed, together with COPPE/UFRJ-Sistemas, a prototype and two patents for a sub-microsecond precision global clock implemented in hardware for clusters [34],[35].

7.1.4. Consolidation of Our Local Research Group

In 1997, we founded the high-performance computing research group at UFES. During the two years of this project (2003–2004), we consolidated the group with the creation of the High-Performance Computing Laboratory (www.lcad.inf.ufes.br).

7.1.5. Improvement of Local Research Infrastructure

In 2003, the Enterprise Cluster of the High-Performance Computing Laboratory at UFES became operational13. This cluster has 65 processors and in June 2004 was ranked 53rd on clusters.top500.org.

7.2. PQ Project 2005–2007 – Computer Architectures and Advanced Memory Hierarchies

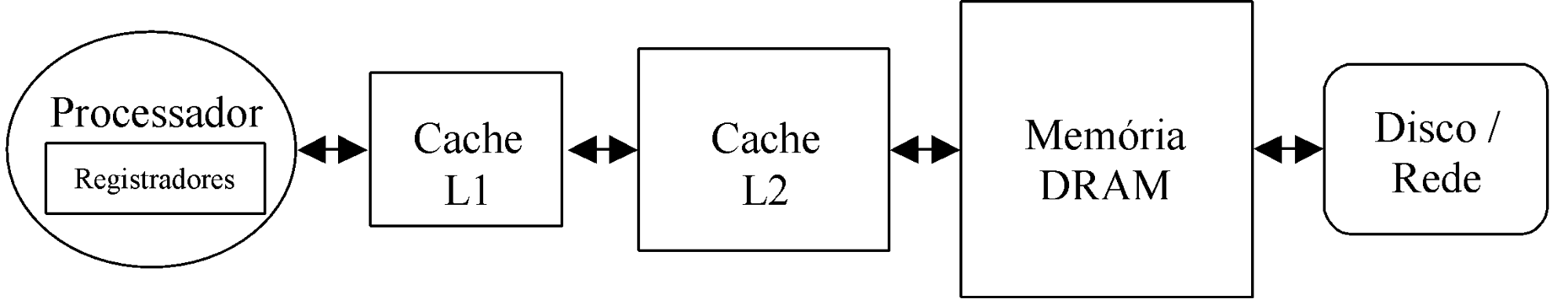

To make a large amount of memory available to programmers and allow access to this memory at high speed and low cost, a hierarchy of different types of memory is currently used (Figure 34).

At one end of the hierarchy, inside the processor, registers constitute the primary source of data and instructions. When the data and/or instruction needed for computation are not in its internal registers, the processor requests them from the Level 1 (L1) Cache(s). If they are not in L1, L2 is examined, and so on until the disk, network, or another storage or interconnection device provides the requested data or instructions (there are systems with a different number of cache levels). Typically, starting from the registers, the entire hierarchy can be treated by the programmer as a single memory with a size equal to the maximum allowed by the Instruction Set Architecture (ISA), with hardware and software structures associated with L1, L2, and the DRAM-Disk/Network/etc. interface handling the movement of data and instructions between the processor and the memory hierarchy.

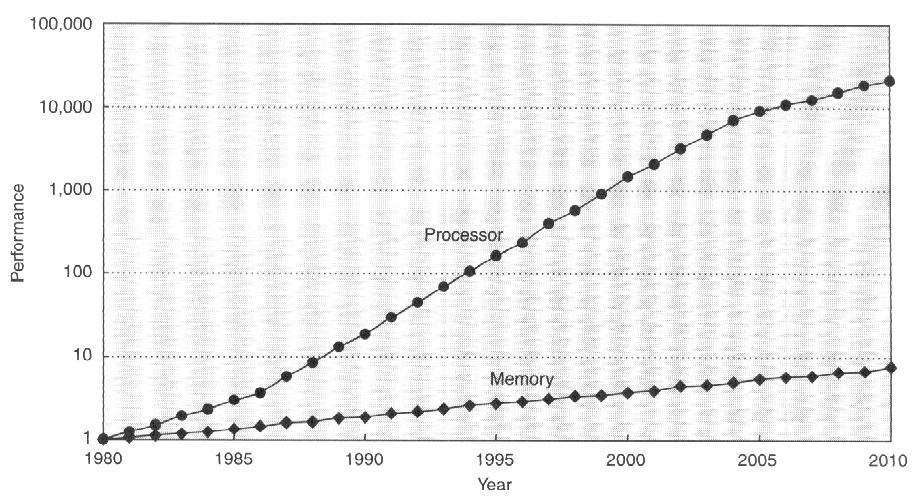

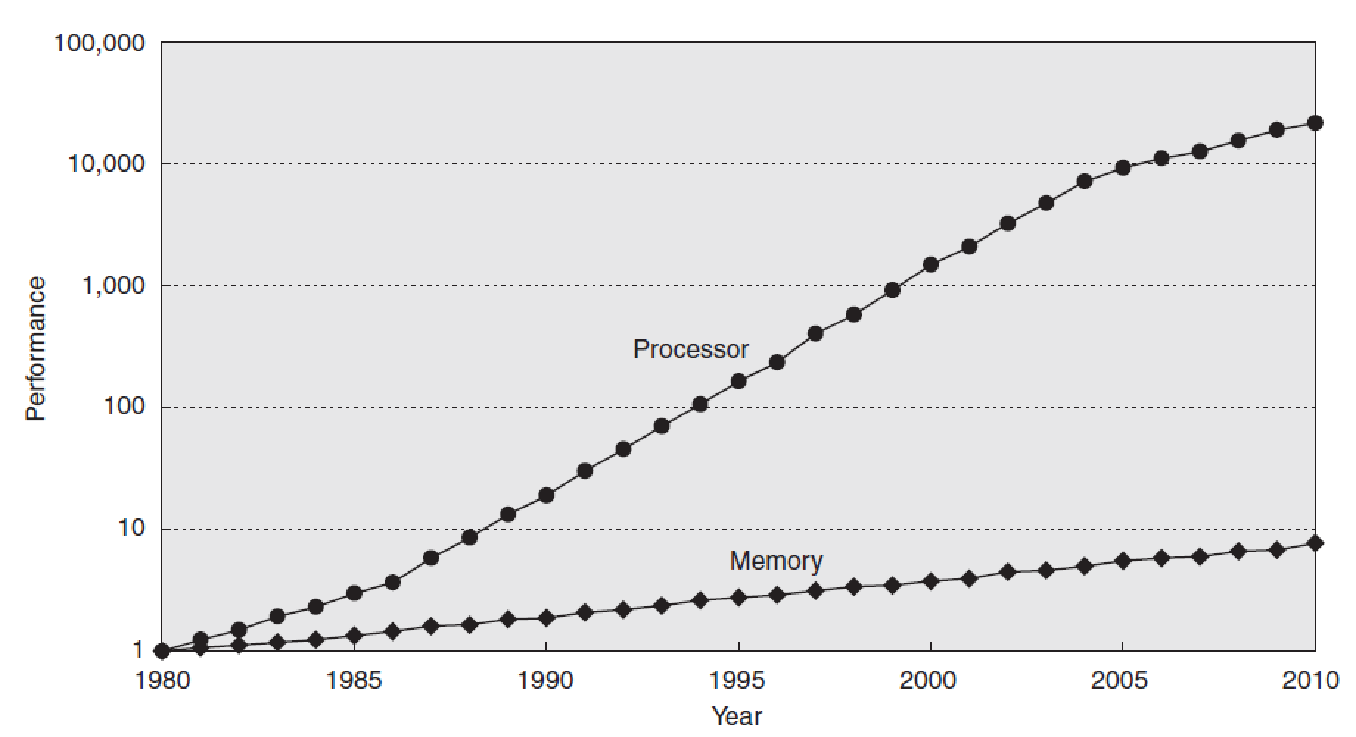

Typically, the L1 cache is small (tens of Kbytes) and operates at processor speed, while the L2 cache is on the order of tens of times larger and tens of times slower than L1 (access time equal to approximately 10 processor cycles). Today, the latency of accessing DRAM memory (or main memory) is some hundreds of times greater than the processor cycle time. This speed difference tends to increase rapidly in the near future due to the observed difference between the evolution of processor and main memory performance. This is illustrated by Figure 2, which shows the evolution of processor (Processor) and memory (Memory) performance from 1980 to 2010 (estimate) – in 1980, processor and memory performance are presented as equal because the computers (based on microprocessors) of that time did not have cache and accessed main memory directly [36].

As shown in Figure 35, the evolution of processor performance (single core) has been significantly more pronounced than that of memory performance – in many-core systems[37], the performance gap tends to grow at an even higher rate. This fact imposes a continued research effort to reduce the effect of memory latency on the performance of computational systems.

In this research project, we investigated alternatives for the dynamic exploitation of opportunities to reduce the effect of latency on the performance of computational systems in three contexts: (i) processor architectures; (ii) memory hierarchy architectures; and (iii) synchronization mechanisms. In the context of processor architectures, we extended the Dynamically Trace Scheduling VLIW (DTSVLIW) architecture to support multiple execution contexts; we also developed a new architecture that executes code in two modes, a sequential one and a dataflow one, called Dynamically Trace Scheduling Dataflow (DTSD). In the memory hierarchy context, we developed a new cache architecture that takes advantage of virtual memory concepts to reduce misses. In the synchronization systems context, we developed an innovative hardware device for thread synchronization in clusters: i.e., a system to provide barrier and lock primitives.

In addition to the research work discussed above, in this project we supervised undergraduate, master's, and doctoral students in other topics related to advanced processor architectures, high-performance computing, and cognitive science, areas of great interest to students in the Master's program in Computer Science and the Master's and Doctoral programs in Electrical Engineering at UFES.

7.2.1. Research Activities Developed in the Project

In 1998, we proposed the Dynamically Trace Scheduling VLIW (DTSVLIW) architecture9,10. This architecture uses the execution locality observed in code to exploit instruction-level parallelism. In our studies, we found that the DTSVLIW suffers more from the effects of memory hierarchy latency than the Superscalar architecture19. However, without these effects, the DTSVLIW would have better performance than the Superscalar, both in terms of ILP exploitation and energy efficiency (energy consumption per instruction)14. The observation of this impact of memory hierarchy latency on DTSVLIW performance led us to pursue two paths: the development of new processor architectures based on the DTSVLIW that are less sensitive to memory latency; and the development of new memory hierarchy architectures.

Within the first path mentioned, we proposed a DTSVLIW with multiple execution contexts (a multithreaded DTSVLIW)22,[38],[39] – in this new architecture, when a thread needs to wait for a memory access, the hardware it occupies can be yielded to another thread, improving the architecture's throughput. We also proposed a new architecture that executes code in two distinct modes, a scalar one and a dataflow one, which we called Dynamically Trace Scheduling Dataflow (DTSD) [40]. In the DTSD architecture, scalar instructions are fetched, one at a time, from the instruction cache and executed by a simple pipelined processor – i.e., the architecture's Primary Processor (Figure 36). The Scheduling Unit performs the dynamic dataflow scheduling of the path produced by the execution of these scalar instructions, thereby assembling blocks of dataflow instructions; these blocks are stored in the dataflow instruction block cache. If the same code segment needs to be executed again, the instructions from this segment can be provided by the dataflow cache and executed by the Dataflow Machine in Figure 36. The DTSD takes advantage of both the characteristics of the DTSVLIW10 and other architectures [41],[42]. Thanks to the dataflow execution mode, the negative effect caused by memory hierarchy latency is reduced, since memory access instructions will only block the execution of other instructions that depend on them, and not the execution of all instructions, as in the case of the DTSVLIW architecture.

Within the second path mentioned, we proposed a new cache memory architecture inspired by the fact that the size and latency of the last-level cache (typically level 2, or L2) are making the L2-memory interface very similar to the memory-disk interface in systems with virtual memory. In this research work, we took advantage of some concepts used in virtual memory systems to implement a new type of cache capable of reducing the impact of memory hierarchy latency and energy consumption, which we called Dynamic Block Remapping Cache (DBRC) [43].

In virtual memory systems, main memory is used as a cache for the disk. For this, the virtual memory space is divided into regions with a certain number of bytes called pages, which can be mapped to main memory, which is also divided into pages. Virtual memory pages and main memory pages, or physical memory pages, are numbered from zero to the maximum number allowed by the size of each of these memories. A hierarchy of tables allows mapping virtual memory pages to physical memory pages. When the computational system needs to access a virtual page, this table hierarchy is examined using the virtual page number as an index. If the virtual page is already mapped to a physical page, the physical page number is retrieved from the table hierarchy and can be used for access36. That is, the table hierarchy stores the translation of virtual page numbers to physical page numbers, being designed to allow any virtual page to be mapped to any physical page – this makes virtual memory systems fully associative disk caches.

A fully associative organization allows sophisticated global page replacement algorithms (which, at the time of a replacement, consider all virtual memory pages allocated in physical memory), contributing to higher hit rates. Once performed, a virtual-to-physical page translation is stored in a small translation cache (Translation Look-aside Buffer - TLB36). Subsequent accesses can find the translation in the TLB and avoid the costs associated with a table hierarchy lookup.

Similarly to the approach used in virtual memory systems, which use a table hierarchy (part of it stored on the disk itself) to map virtual memory pages to physical memory pages, the DBRC uses a table hierarchy to map physical memory blocks to L2 cache blocks43. Most of this table hierarchy is stored in L2 itself and, as in virtual memory systems, a block TLB is used to speed up access to previously performed translations. Thanks to its table hierarchy, the DBRC is fully associative and, although translations may require several clock cycles, they are infrequent. The benefits brought by full associativity and the use of global replacement algorithms implemented in hardware result in higher hit rates and lower energy consumption than conventional caches.

In the area of parallel computational systems programming, we developed or collaborated in the development of several parallel algorithms for solving computational fluid mechanics problems on computer clusters [44],[45],[46],[47],[48],[49],[50],[51],[52].

7.2.2. Other Research and Teaching Activities

In addition to the activities described so far, we also carried out other teaching and research activities in the areas of high-performance computing and cognitive science.

7.2.2.1. High-Performance Computing

Due to the need for computational resources to conduct experiments (processor architecture simulation demands great computational capacity), I joined other colleagues from the Computer Science and Environmental Engineering departments at UFES, and together we founded a High-Performance Computing research group. The actions of this group resulted in obtaining resources from the National Petroleum Agency to build a cluster of 65 processors. With resources granted by FINEP (CT-INFRA 2005), we imported 70 additional machines (35 of them quad-core and 35 dual-core) and two gigabit switches. Thus, in a period of 5 years (from 2003 to 2008), we went from 65 processors to 210. The Enterprise Cluster has been an important research tool for several researchers at UFES and at other universities in the country.

I also developed, during the period of this project, a fruitful cooperation with Prof. Cláudio Amorim, from COPPE/UFRJ-Sistemas, in the area of High-Performance Computing. This cooperation resulted in the development of a synchronization system to provide barrier and lock primitives in clusters [53].

7.2.2.2. Cognitive Science

There is great demand for supervision in the area of cognitive science in the Master's program in Computer Science, and in the Master's and Doctoral programs in Electrical Engineering at UFES. For this reason, I served as advisor for several research works in this area. The results of these works led to the development of research projects that received significant funding, as described below.

7.2.3. Other Research Projects and Relevant Achievements of the 2005–2007 Period

In this section, we briefly present other research projects in which we participated. We list only the approved projects, with disbursement of resources, in which we served as coordinator. During this period, we were responsible for research investments totaling R$ 4,196,408.00.

In this section, we also list other relevant achievements to which we contributed and that are directly or indirectly related to science, technology, and innovation.

7.2.3.1. Projects

- Automatic Classification in CNAE-Subclass (2006–2009)

- Description: The National Classification of Economic Activities (CNAE) is a hierarchical table of activities and associated codes, and its most detailed level, CNAE-Subclasses, is used as an instrument for national standardization of economic activity codes used by various public agencies of direct administration in the management and control of actions at each level of government (federal, state, or municipal). In public administration registries, CNAE-Subclass codes are assigned to all economic agents engaged in the production of goods and services, and at the Federal Revenue Service, one or more CNAE-Subclass codes must be provided when registering a new legal entity (when registering a CNPJ) or when modifying its constitutive acts. Currently, the selection and assignment of CNAE-Subclass codes is done manually by the informant themselves or by trained human coders supported by computational search tools in the CNAE-Subclass table, made available by the Brazilian Institute of Geography and Statistics (IBGE). The main objective of this project is to develop a prototype of a Computational System for the Automatic Coding of Economic Activities – SCAE. The SCAE will receive as input textual descriptions of economic activities and will produce as output the descriptors of the economic agent's activities and their respective CNAE-Subclass codes. To this end, the SCAE will generate internal system representations of the CNAE table and of the activities of the economic agent for which CNAE-Subclass codes are to be assigned for administrative use. These representations must be such that they allow identifying the correct semantic correspondence between the free textual description of the economic agent's activities and one or more items of the CNAE-Subclass table descriptors. Three techniques will be used for this internal representation: Artificial Neural Networks, Bayesian Networks, and Latent Semantic Indexing. The SCAE will also produce a certainty measure for each code and can be programmed to engage a human operator in case a certainty measure below a certain level is obtained. The coding of the establishment, obtained through SCAE-Subclass, must be exhaustive and sufficient for the identification of the economic agent's main activity, according to the pertinent rules.

- Resources: R$ 2,613,500.00 (Federal Revenue Service)

- High-Speed Metropolitan Network of Vitória – Metrovix (2005– )

- Description: The Metropolitan Network for Education and Research (REDECOMEP) initiative is part of a broader action by the Ministry of Science and Technology (MCT), and aims to deploy high-speed networks in the country's metropolitan regions served by Points of Presence of the National Research and Education Network (RNP). The initiative, coordinated by RNP, is based on the premise of deploying a proprietary optical infrastructure, interconnecting research and higher education institutions. The network deployment model provides for the construction of entirely new infrastructure and/or the use of existing ducts, cables, and optical fibers through concession of usage rights and partnerships. The Metrovix Network, which has 52.543 km of laid fiber in length, is the result of a partnership involving: the Federal Center for Technological Education of Espírito Santo; the Higher School of Sciences of Santa Casa de Misericórdia de Vitória; the Hospital of Santa Casa de Misericórdia de Vitória; the Capixaba Institute for Research, Technical Assistance, and Rural Extension; the Solar Monjardim Museum; the Municipality of Vitória; the National Research and Education Network; the State Secretariat of Science and Technology of Espírito Santo; and the Federal University of Espírito Santo. It was launched by the Minister of Science and Technology, Sergio Rezende, on August 23, 2005, and was inaugurated on August 27, 2007.

- Resources: R$ 1,103,048.00 (MCT/FINEP/RNP)

- Modernization of the Research Infrastructure in the High-Performance Computing Area at UFES (2005–2008)

- Description: This project sought to improve the infrastructure of the Enterprise Cluster at LCAD - DI/UFES (www.lcad.inf.ufes.br). Through it, 70 machines (35 quad-core and 35 dual-core) and two gigabit switches were acquired via importation, which together will be part of the new Enterprise Cluster, currently under construction.

- Resources: R$ 270,000.00 (FINEP, CT-INFRA)

- Strengthening the High-Performance Computing and Computational Intelligence Areas of the Graduate Program in Computer Science at UFES (2007–2009)

- Description: The central objective of this project is to strengthen and increase the interactions between the Computational Intelligence and High-Performance Computing research lines of the Graduate Program in Computer Science at UFES, counting on the support of research groups from already consolidated graduate programs at COPPE/UFRJ and USP/São Carlos. The COPPE/UFRJ research group will primarily support and interact with the researchers in the High-Performance Computing line of the non-consolidated program, while the USP/São Carlos research group will interact with and support the researchers in the Computational Intelligence line. In the High-Performance Computing line, joint works will be carried out between the UFES and COPPE teams on the following topics: (i) new programming paradigms suitable for future parallel systems with tens or hundreds of processors implemented through multi-core integrated circuits; (ii) study and parallel implementation of new adaptive techniques, in time and space, for representing finite element mesh configurations applied to flow problems in porous media. In the Computational Intelligence line, work will be carried out in the area of knowledge discovery in databases, involving data mining, pattern recognition, and machine learning techniques. Specifically, studies will be conducted on the following topics: (i) feature selection; (ii) adaptations of classical classification techniques to the case of data with temporal variables; (iii) integration of symbolic classifiers using multi-agent systems. The integrating element of the research work to be carried out in this project will be the study and development of techniques, algorithms, methodologies, hardware, and software for the application of parallel computing in the learning stage of data mining processes, aiming to make these processes more effective.

- Resources: R$ 169,000.00 (CNPq, Casadinho)

- Automating the Measurement of Dimensions, Areas, and Volumes (2006–2007)

- Description: In this research and technology development work, the visual perception system that allows humans to mentally form a 3D image of the external world was studied. The proposal was the implementation of an artificial binocular vision system capable of emulating a restricted part of the biological visual system related to perception attributes involved with 3D vision. In the implementation, information from images captured by cameras was used to control the process of locating points of interest in these images. This control is achieved through signals generated by an artificial neural network that receives as input images pre-processed by filters. With a focus of attention of interest, other parts of the system work on constructing the 3D model and on image recognition, which allows the evaluation of characteristics of objects in the field of vision, such as: dimensions, surface areas, and volumes.

- Resources: R$ 21,100.00 (FAPES)

- New High-Performance Architectures Based on Dynamic Instruction Scheduling (2005–2007)

- Description: In this research work, we investigated new mechanisms for dynamic detection (during code execution) of opportunities for scheduling instructions for parallel execution that are not strongly affected by memory hierarchy latency. The objective of the project was to investigate mechanisms that would allow dynamic translation, via hardware, of scalar code from existing instruction set architectures to EDGE (Explicit Data Graph Execution) code, for subsequent execution on an EDGE machine also dynamically. For this, we used our experience with the DTSVLIW architecture which, in a manner equivalent to what we proposed to investigate, translates scalar code to VLIW code and subsequently executes this code in VLIW mode, dynamically. We used an experimental approach in this investigation. For this, we made use of publicly available open-source instruction set architecture simulation environments, in addition to our own simulators.

- Resources: R$ 19,760.00 (CNPq, Universal)

7.2.3.2. Other Relevant Achievements of the Period

- In 2005, we coordinated the development of the 2005–2010 Strategic Plan of UFES and, in 2006, of the Institutional Pedagogical Project and the Information and Communication Technologies Master Plan of the University.

- In 2005, we were appointed as a standing member of the Steering Committee of the SBC/IEEE International Symposium on Computer Architecture and High Performance Computing.

- In 2007, we served as guest editor of the International Journal of Parallel Programming for the special issue on the 18th SBC/IEEE International Symposium on Computer Architecture and High Performance Computing.

- In March 2007, we became a collaborating member of the Graduate Program in Electrical Engineering (PPGEE) at UFES. This allowed us to change from the status of co-advisor to advisor of the doctoral student Fábio Daros de Freitas.

- In 2006, we served as program committee coordinator of the 18th SBC/IEEE International Symposium on Computer Architecture and High Performance Computing.

- In 2005, we served as co-coordinator of the program committee for the High-Performance Computing section of the XXVI CILAMCE.

- During the entire period of this project (2005 to 2007), we served as a member of the program committee of the SBC/IEEE International Symposium on Computer Architecture and High Performance Computing, and as a member of the program committee of the SBC Workshop on High-Performance Computing Systems.

- During the entire period of this project (2005 to 2007), we served as chair of the Management Committee of the High-Speed Metropolitan Network of Vitória – Metrovix.

7.3. PQ Project 2008–2010 — Many-Core Architectures and Computational Intelligence

Until the mid-2000s, the trend in the processor industry was to use the additional transistors provided by "Moore's Law" [54],[55] to implement Integrated Circuits (ICs) containing computational systems (processor, its caches, etc.) with a single, increasingly powerful processor. However, three obstacles prevented the continuation of this trend: (i) power consumption and the consequent need for heat dissipation from the high-frequency switching of an ever-increasing number of transistors; (ii) the growing latency of the memory hierarchy; and (iii) the difficulties associated with further exploitation of instruction-level parallelism (Figure 37).